About 70% of modern SaaS runs on some form of multi-tenancy. The other 30% have a reason. Pool, silo, bridge: when each wins, with the AWS SaaS Lens vocabulary and copy-paste-ready Postgres RLS code.

By Vishvajit Pathak, Co-Founder, MarsDevs. Published April 30, 2026.

TL;DR: Default to multi-tenant with Postgres Row-Level Security. Switch to single-tenant only when a contract or compliance regime (HIPAA BAA per customer, PCI Level 1, sovereign data residency) requires it, or when one tenant's workload justifies dedicated infrastructure. We have shipped both models across SaaS builds in B2B analytics, fintech, healthcare, marketplace, and internal-ops since 2019. The hybrid bridge model (pooled by default, siloed for enterprise) is what 2026 SaaS startups actually run. Decision matrix and migration playbook below.

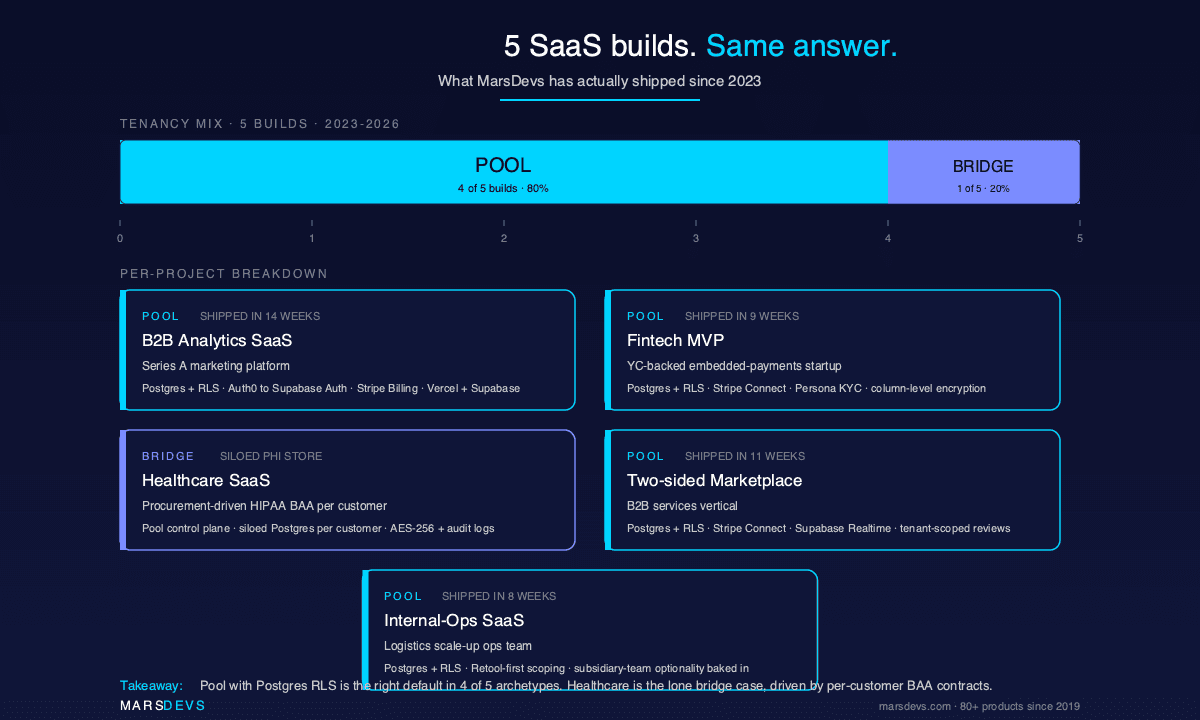

If you are picking a SaaS tenancy model on day one, pick multi-tenant pooled with Postgres Row-Level Security and plan a bridge migration for your future enterprise tier. About 70% of modern SaaS now runs on some form of multi-tenancy, and the economics of a late switch get worse, not better, the longer you wait to commit. We have shipped 5 SaaS builds since 2023 across B2B analytics, fintech, healthcare, marketplace, and internal-ops. Four of five ran on a pooled Postgres model with tenant_id plus RLS from day one.

This is an opinionated default, not a survey of options. The reasoning is simple. Multi-tenant pooled gives you the lowest base infrastructure cost, the simplest deploy pipeline, and the smallest per-tenant operational overhead. The tradeoffs (noisy neighbor risk, weaker blast-radius isolation, more careful schema design) are real, but they can be mitigated with code. The tradeoffs of going single-tenant on day one (linear cost growth, N-times schema migrations, parallel ops surfaces) can be mitigated only with money.

The right time to break the default is when a paying customer makes you, not before. Healthcare prospects asking for per-customer BAAs. Fintech enterprise plans citing PCI Level 1. EU customers citing GDPR data residency. Those are the moments when the bridge model wins: keep the pooled tier serving 95% of your revenue and silo only the customers who pay for it. We say more about that in the real cost of our last 5 SaaS builds, where 4 of 5 archetypes ran pooled and only the healthcare build needed siloed PHI infrastructure.

The rest of this guide names the three models the way the AWS SaaS Lens names them (silo, pool, bridge), shows the actual SQL for tenant isolation in Postgres, lays out a stage-by-industry decision matrix, and walks through the migration playbook for promoting a single tenant out of the pool when an enterprise contract demands it.

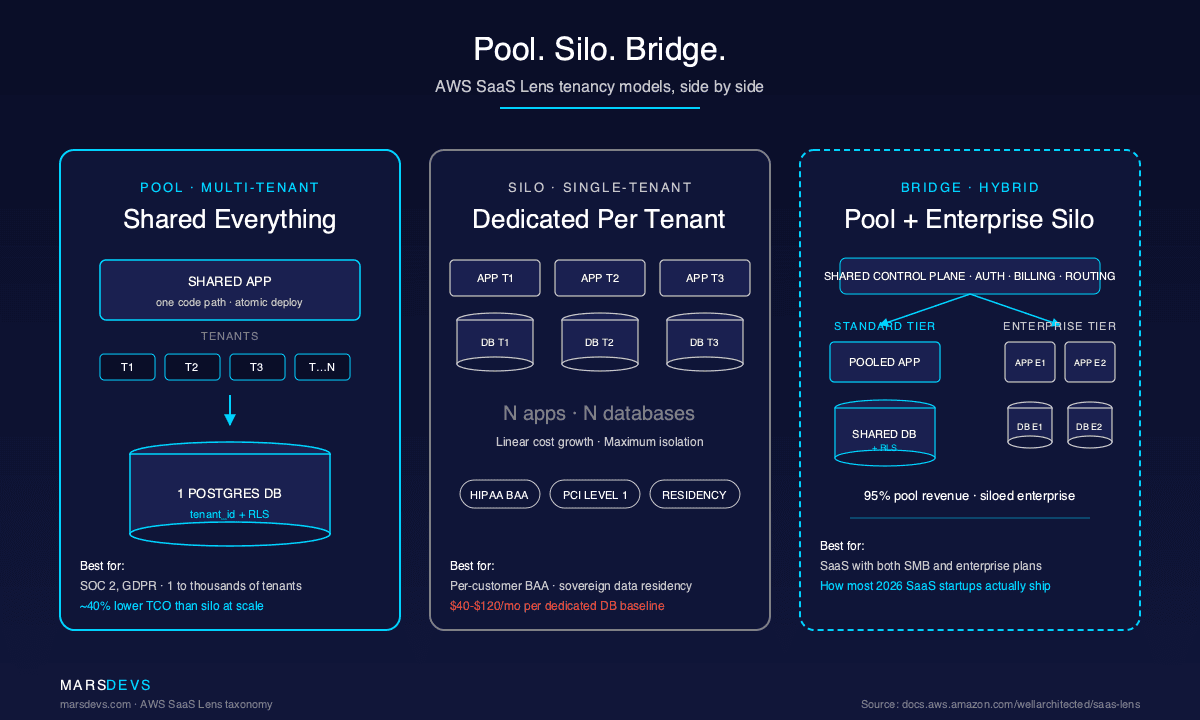

A multi-tenant or pooled SaaS runs all customer data inside one shared application stack and one shared database, with a tenant_id column (or organization ID, or workspace ID) on every row that scopes data to a single customer. This is the AWS SaaS Lens "pool" model. It is the cheapest, fastest, and most operable tenancy pattern for early-stage SaaS.

Pool model wins on three axes. Infrastructure cost is flat as you add customers, because every new tenant adds rows, not servers. Deploys are atomic, because you are pushing one schema and one code path. Observability is unified, because every metric, log, and trace lives in one place. The result is a SaaS that scales to thousands of small customers on a single Postgres primary plus a couple of read replicas.

Cost economics are the headline reason it dominates. Multi-tenant lowers total cost of ownership by roughly 40% compared to silo at the same scale, per Future Processing's 2026 multi-tenant guide. The reason is structural. In a pool model your fixed infrastructure cost (one DB primary, one app cluster, one observability stack) amortizes across every paying customer. Adding tenant 1,000 costs almost nothing. In silo, adding tenant 1,000 means provisioning a 1,000th database, a 1,000th deploy target, and a 1,000th set of credentials.

The ceiling on the pool model is not technical. Postgres 16 with RLS, indexes on tenant_id, and pgbouncer connection pooling will hold thousands of tenants in one database without breaking a sweat. The ceiling is contractual. The day a customer's procurement team writes "dedicated environment" or "physically isolated database" into their contract, the pool model stops being contractually acceptable for that one tenant. That is when the bridge model earns its keep.

| Pool model fact | Why it matters |
|---|---|
| One app, one DB, tenant_id per row | Lowest infra cost. Atomic deploys. Unified observability. |
| RLS enforces isolation at the database, not the app | A bug in your application code cannot leak cross-tenant data if the policy is correct. |
| Adding tenant 1,000 costs near zero | Fixed cost amortizes across every tenant. TCO ~40% lower than silo at scale. |
| Noisy neighbor is the canonical failure mode | One tenant's heavy job degrades performance for the rest. Mitigated with rate limits, sharding, replicas. |

A single-tenant or siloed SaaS provisions a dedicated application stack and a dedicated database for each customer. This is the AWS SaaS Lens "silo" model. It wins in exactly four situations: a HIPAA BAA that the customer wants written per environment, PCI Level 1 controls, a sovereign data residency clause (EU-only, India DPDP, Australia, GovCloud), or a contract that explicitly names "dedicated infrastructure" as a deliverable.

Silo gives you the strongest possible isolation. The blast radius of a bad deploy, a runaway query, or a security incident is one customer, not all of them. Compliance audits get easier, because every control surfaces at the per-tenant level. Encryption keys can be customer-managed (CMK / BYOK via AWS KMS or GCP Cloud KMS) without complicating other tenants. For a healthcare scale-up shipping PHI, this is often the only architecture the compliance officer signs off on.

The tradeoff is cost. Silo grows linearly with tenant count. Every customer adds one Postgres instance, one app deployment slot, one set of secrets, one CI pipeline target, one observability footprint. At 50 tenants that is manageable. At 500 it consumes a full-time SRE. The math is not subtle: a dedicated managed Postgres on Aurora Serverless v2, RDS, Neon, or Supabase Pro typically lands in the $40 to $120 per-tenant per-month baseline before compute or storage, sourced from each provider's public pricing pages. Multiply by 500 customers and you are paying $20,000 to $60,000 per month in idle database fees alone.

Silo is honest in two cases and dishonest in a third. It is honest when your average contract value is high (six figures plus) and per-tenant infra is a rounding error. It is honest when a regulator or customer contract makes the pool model illegal for that segment. It is dishonest when a founder picks silo on day one "for security" without the revenue to justify it. We have seen pre-seed founders burn $4K to $8K a month on idle infrastructure for 30 trial customers, none of whom needed isolation that strong. Pick silo when a paying customer makes you, not because it sounds safer.

The bridge model, also from the AWS SaaS Lens, is a hybrid where standard-tier customers share pooled infrastructure and enterprise-tier customers get their own siloed environment. This is the architecture most SaaS startups end up running by year two, even when they started pure pool, because it is the only way to capture both segments without rebuilding from scratch.

Here is what bridge looks like in practice. Your free, starter, and growth plans live on a pooled Postgres with RLS. Your enterprise plan provisions a dedicated database (and sometimes a dedicated app cluster) per customer, billed at a higher tier that covers the infrastructure cost. The control plane (auth, billing, tenant routing) is shared. The data plane (the actual customer data and compute) splits between pool and silo based on plan.

The bridge model wins because it lets you sell to two markets with one product. SMB customers get the cheap, fast, pooled experience. Enterprise customers get the isolation their procurement and compliance teams demand. You charge enterprise enough to cover the dedicated infrastructure plus a margin. This is the pattern Atlassian, GitHub Enterprise, Slack Enterprise Grid, and most other large B2B SaaS products converged on once they moved into enterprise sales.

The risk in bridge is operational. You now run two architectures in parallel, and the temptation is to special-case enterprise tenants in your application code. Resist that. The right pattern is: same code path, same schema, different tenant routing layer. The control plane reads the tenant's plan from a metadata table and resolves their database connection string at request time. From the application's perspective, every tenant looks the same. Only the connection pool knows there are two tiers underneath.
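A minimal sketch of that routing metadata, assuming a hypothetical tenant_routing table in the shared control-plane database. The table, column, and secret names are illustrative, and storing a reference to a secret rather than a raw connection string is a design assumption of this sketch, not a requirement of the bridge model.

```sql
-- Hypothetical control-plane table: which data plane each tenant lives on.
CREATE TABLE tenant_routing (
  tenant_id           uuid PRIMARY KEY,
  plan                text NOT NULL
                      CHECK (plan IN ('free', 'starter', 'growth', 'enterprise')),
  data_plane          text NOT NULL DEFAULT 'pool'
                      CHECK (data_plane IN ('pool', 'silo')),
  -- NULL for pooled tenants. For siloed tenants, a pointer to the secret
  -- that holds the dedicated database's connection string.
  silo_dsn_secret_ref text,
  -- Pooled tenants must not carry a silo reference; siloed tenants must.
  CHECK ((data_plane = 'pool') = (silo_dsn_secret_ref IS NULL))
);

-- Per-request lookup the routing layer runs before picking a connection pool.
PREPARE resolve_tenant(uuid) AS
  SELECT data_plane, silo_dsn_secret_ref
  FROM tenant_routing
  WHERE tenant_id = $1;

-- Example: one pooled starter tenant, one siloed enterprise tenant.
INSERT INTO tenant_routing (tenant_id, plan, data_plane, silo_dsn_secret_ref) VALUES
  ('00000000-0000-0000-0000-000000000001', 'starter', 'pool', NULL),
  ('00000000-0000-0000-0000-000000000002', 'enterprise', 'silo', 'tenants/acme/db-dsn');

EXECUTE resolve_tenant('00000000-0000-0000-0000-000000000002');
-- returns one row: data_plane = 'silo', silo_dsn_secret_ref = 'tenants/acme/db-dsn'
```

The application code never branches on the result; only the connection-pool layer does, which keeps the schema and query paths identical across both tiers.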

| Model | Cost economics | Isolation strength | Ops complexity | Compliance fit | Blast radius | Scale ceiling |
|---|---|---|---|---|---|---|
| Pool (multi-tenant) | Lowest. Flat fixed cost; ~40% lower TCO than silo at scale. | Logical only. Enforced by RLS or app code. | Lowest. One deploy, one DB, one observability stack. | SOC 2 Type II, GDPR with controls. HIPAA possible with strong RLS plus encryption. | All tenants share fate on a bad deploy or runaway query. | Thousands of tenants on Postgres 16 with RLS plus pgbouncer. |
| Silo (single-tenant) | Highest. Linear with tenant count. $40 to $120/mo per dedicated DB before compute. | Maximum. Physical-level separation. | Highest. N deploys, N DBs, N secrets per release. | HIPAA BAA per tenant, PCI Level 1, sovereign residency. | One tenant. | Limited by ops headcount, not infrastructure. |
| Bridge (hybrid) | Mixed. Pool tier flat; silo tier priced into enterprise plan. | Tiered. Logical for standard, physical for enterprise. | Medium. Two data planes, one control plane. | Pool tier covers SOC 2 / GDPR. Silo tier covers HIPAA / PCI / residency. | Tenant-specific in silo tier, shared in pool tier. | Same as pool plus enterprise count. |

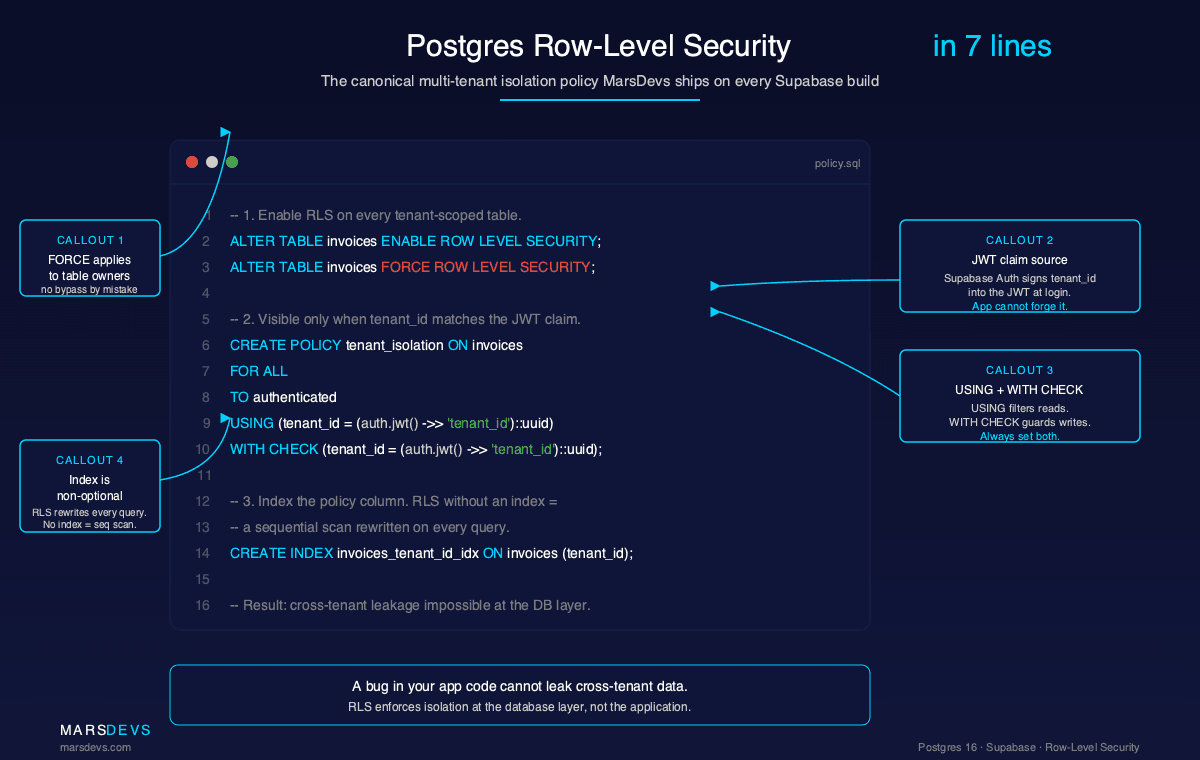

Postgres Row-Level Security (RLS) is non-optional for any Supabase-backed or Postgres-backed multi-tenant SaaS in 2026. RLS enforces tenant isolation at the database, not the application, which means a bug in your API or ORM cannot leak data across tenants if the policy is correct. This is the single most important multi-tenant database design decision after picking Postgres itself.

RLS works by attaching policies to tables that the database checks on every query. The policy reads a value from the connection context (typically a JWT claim like tenant_id or org_id injected by Supabase Auth, Clerk, WorkOS, or Auth0) and filters rows accordingly. Application code never has to remember to add WHERE tenant_id = ?. The database does it. According to the Supabase Row Level Security docs, this is the canonical pattern for any Postgres-backed multi-tenant app.

Here is the minimal RLS policy we apply on every Supabase-backed multi-tenant build:

```sql
-- 1. Enable RLS on the table.
ALTER TABLE invoices ENABLE ROW LEVEL SECURITY;

-- 2. Add the policy: a row is visible only when its tenant_id
--    matches the tenant_id claim on the JWT presented to Postgres.
--    Assumes tenant_id is a top-level claim in the JWT (for example,
--    added by a Supabase custom access token hook).
CREATE POLICY tenant_isolation ON invoices
  FOR ALL
  TO authenticated
  USING (tenant_id = (auth.jwt() ->> 'tenant_id')::uuid)
  WITH CHECK (tenant_id = (auth.jwt() ->> 'tenant_id')::uuid);

-- 3. Index the policy column. RLS without an index on tenant_id
--    forces the planner to full-scan every query.
CREATE INDEX invoices_tenant_id_idx ON invoices (tenant_id);

-- 4. Optional: revoke all privileges from the anon role so unauthenticated
--    requests cannot touch the table even if RLS is disabled by mistake.
REVOKE ALL ON invoices FROM anon;
```
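One way to check the policy by hand, assuming a Supabase project where you run SQL as a role that can switch to authenticated (the SQL editor's postgres role can), and relying on Supabase's auth.jwt() reading its claims from the request.jwt.claims setting. The two tenant UUIDs are placeholders, and everything runs inside a transaction that is rolled back.

```sql
BEGIN;

-- Impersonate an authenticated user of tenant ...0001 for this transaction only.
SET LOCAL ROLE authenticated;
SELECT set_config(
  'request.jwt.claims',
  '{"role": "authenticated", "tenant_id": "00000000-0000-0000-0000-000000000001"}',
  true  -- transaction-scoped, like the per-request settings PostgREST uses
);

-- Should list only tenant ...0001.
SELECT DISTINCT tenant_id FROM invoices;

-- Should return 0: the USING clause hides tenant ...0002's rows entirely.
SELECT count(*) FROM invoices
WHERE tenant_id = '00000000-0000-0000-0000-000000000002';

-- If any rows are visible, moving them to another tenant should fail the
-- WITH CHECK clause with "new row violates row-level security policy".
UPDATE invoices SET tenant_id = '00000000-0000-0000-0000-000000000002';

ROLLBACK;
```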

Three things will go wrong if you skip the supporting work. First, RLS without an index on tenant_id produces full table scans, because the planner has no index it can use for the policy predicate. Second, RLS behind a connection pooler is fragile unless the tenant context is set per transaction: use pgbouncer in transaction mode and set the JWT claim at the start of every transaction, because a session-level setting can leak to the next client that reuses the same server connection. Third, RLS does not protect background jobs running as the service_role, which bypasses policies. For cron jobs and webhooks, scope the tenant explicitly in the SQL or run them as a tenant-scoped role.
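A minimal sketch of the tenant-scoped-role option in plain Postgres, assuming a custom setting named app.tenant_id and a hypothetical tenant_worker role (without BYPASSRLS) that your job runner's login role is a member of. set_config(..., true) scopes the value to the current transaction, which is what keeps it from leaking across clients under pgbouncer's transaction mode.

```sql
-- Hypothetical role for background jobs. It lacks BYPASSRLS,
-- so policies on invoices still apply to it.
CREATE ROLE tenant_worker NOLOGIN;
GRANT SELECT, INSERT, UPDATE, DELETE ON invoices TO tenant_worker;
-- GRANT tenant_worker TO job_runner;  -- job_runner: hypothetical login role

-- Policy for the worker role, keyed on a transaction-scoped setting.
-- NULLIF turns an unset or reset setting ('' or NULL) into NULL, so the
-- comparison matches no rows and inserts fail the WITH CHECK clause.
CREATE POLICY worker_tenant_isolation ON invoices
  FOR ALL
  TO tenant_worker
  USING (tenant_id = NULLIF(current_setting('app.tenant_id', true), '')::uuid)
  WITH CHECK (tenant_id = NULLIF(current_setting('app.tenant_id', true), '')::uuid);

-- What each job runs, one transaction per tenant:
BEGIN;
SET LOCAL ROLE tenant_worker;
SELECT set_config('app.tenant_id', '00000000-0000-0000-0000-000000000001', true);
SELECT count(*) FROM invoices;  -- counts only this tenant's rows
COMMIT;
```

set_config is used instead of SET LOCAL because it is an ordinary function, so a driver can pass the tenant ID as a bind parameter rather than splicing it into the SQL string.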

The performance question (can Postgres handle thousands of tenants with RLS) has a settled answer in 2026. Yes. With indexes on tenant_id, pgbouncer in transaction pooling mode, and partitioning on tenant_id for the largest tables, a single Postgres 16 primary will hold tens of thousands of small-to-medium tenants. We default to this stack on every B2B SaaS build, and the same pattern shows up in the tech stack we ship 2026 SaaS on.
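A minimal sketch of partitioning one of those largest tables on tenant_id, using a hypothetical events table and an arbitrary four hash partitions. Postgres requires the partition key in every primary key or unique constraint on a partitioned table, hence the composite key.

```sql
CREATE TABLE events (
  tenant_id  uuid        NOT NULL,
  event_id   uuid        NOT NULL DEFAULT gen_random_uuid(),
  created_at timestamptz NOT NULL DEFAULT now(),
  payload    jsonb,
  -- The partition key must be part of the primary key.
  PRIMARY KEY (tenant_id, event_id)
) PARTITION BY HASH (tenant_id);

-- Four partitions; each tenant's rows land in exactly one of them.
CREATE TABLE events_p0 PARTITION OF events FOR VALUES WITH (MODULUS 4, REMAINDER 0);
CREATE TABLE events_p1 PARTITION OF events FOR VALUES WITH (MODULUS 4, REMAINDER 1);
CREATE TABLE events_p2 PARTITION OF events FOR VALUES WITH (MODULUS 4, REMAINDER 2);
CREATE TABLE events_p3 PARTITION OF events FOR VALUES WITH (MODULUS 4, REMAINDER 3);

-- Enable RLS and add the tenant policy on the parent, as with invoices.
-- Until a policy exists, non-owner roles see no rows. Grant privileges on the
-- parent only: a partition queried directly is governed by its own RLS settings.
ALTER TABLE events ENABLE ROW LEVEL SECURITY;

-- A filter on a literal tenant_id lets the planner prune to a single partition.
EXPLAIN SELECT count(*) FROM events
WHERE tenant_id = '00000000-0000-0000-0000-000000000001';
```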

Database-per-tenant means provisioning a dedicated Postgres instance (or cluster) for every customer. It is the strongest isolation pattern, the most expensive operationally, and the only honest answer for a small set of contracts. Per-tenant baseline cost on managed Postgres typically lands at $40 to $120 per month before compute and storage usage, sourced from each provider's public pricing pages.

The cost is not hypothetical. Adding 100 tenants on database-per-tenant adds 100 databases. The pricing pages for Aurora Serverless v2, RDS Postgres, Neon, and Supabase Pro all start at a per-instance baseline before any actual usage, and that baseline is what kills the math at scale. Neon's branching can soften this for tenants that share a base, and Aurora Serverless v2 ACU pricing scales to near-zero on idle, but neither makes the model free.

| Managed Postgres option | Per-tenant baseline ($/mo, before compute) | Realistic break-point | When it's honest |
|---|---|---|---|
| AWS Aurora Serverless v2 | Starts ~$43/mo at 0.5 ACU minimum (per AWS pricing). Scales up on use. | ~50 active tenants. ACU minimums add up. | Enterprise tier with active workload per customer. |
| AWS RDS Postgres (db.t4g.micro and up) | ~$25 to $90/mo per instance baseline (per AWS pricing). | ~30 tenants. T-class throttling hits. | Mid-market enterprise with predictable load. |
| Neon (autoscaling, scale-to-zero, branching) | Free tier viable for many trials. Pro plans from ~$19/mo (per Neon pricing). | Hundreds of tenants if most are idle. | Per-tenant plans with idle-friendly billing and HIPAA needs. |
| Supabase Pro (per-project pricing) | $25/mo per project baseline (per Supabase pricing) plus compute add-ons. | ~100 enterprise tenants. | Fast onboarding for enterprise tier; bridge model. |
| Self-hosted Postgres on EC2/Hetzner | Variable. ~$15 to $60/mo per VM plus your ops time. | Limited by your SRE bandwidth. | Sovereign residency, full control, you have a DBA. |

Per-tenant baseline ranges are sourced from each provider's public pricing pages as of April 2026. Compute, storage, and egress are extra. Validate at quote time.

Our guide to the cost to build a SaaS in 2026 covers the full quote-to-launch breakdown. For tenancy specifically, the rule of thumb is: database-per-tenant is honest when your gross margin per customer is high enough to absorb $50 to $150/mo in dedicated infrastructure without making the unit economics ugly. For an enterprise plan at $2,000/mo MRR, that is a rounding error. For a starter plan at $29/mo MRR, it is a runway-killer.
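The arithmetic behind that rule of thumb, as a throwaway query using the $50 and $150 ends of the range and the two plan prices above; swap in your own numbers.

```sql
-- Dedicated-infrastructure spend as a percentage of plan MRR.
SELECT
  plan,
  mrr_usd,
  round(100.0 * 50  / mrr_usd, 1) AS infra_pct_of_mrr_low,   -- $50/mo infra
  round(100.0 * 150 / mrr_usd, 1) AS infra_pct_of_mrr_high   -- $150/mo infra
FROM (VALUES ('enterprise', 2000), ('starter', 29)) AS plans(plan, mrr_usd);
-- enterprise: 2.5 and 7.5
-- starter:    172.4 and 517.2 (the infrastructure costs more than the plan earns)
```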

The compliance angle is real. Neon's HIPAA write-up covers the case where database-per-tenant becomes the only model that satisfies a per-customer BAA and per-customer encryption-key isolation. If you sell into healthcare or regulated finance and a prospect demands customer-managed keys (BYOK / CMK) on top of a BAA, dedicated databases stop being optional.

HIPAA does not strictly require single-tenant architecture. Multi-tenant Postgres with RLS, AES-256 encryption at rest, TLS 1.2+ in transit, audit logging, and a properly executed BAA can be HIPAA-compliant on AWS, Azure, and GCP. What pushes healthcare SaaS to single-tenant is not the regulation. It is the customer.
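Audit logging is one of those controls. A minimal trigger-based sketch for row changes on the invoices table from the RLS example, assuming a hypothetical audit_log table and a hypothetical writer role app_user. It is a sketch, not a full HIPAA audit design; extensions such as pgaudit or your cloud provider's database audit logs are alternatives.

```sql
-- Hypothetical append-only audit table for tenant data changes.
CREATE TABLE audit_log (
  audit_id   uuid        PRIMARY KEY DEFAULT gen_random_uuid(),
  tenant_id  uuid,
  table_name text        NOT NULL,
  operation  text        NOT NULL,  -- INSERT, UPDATE or DELETE
  changed_by text        NOT NULL DEFAULT current_user,
  changed_at timestamptz NOT NULL DEFAULT now(),
  old_row    jsonb,
  new_row    jsonb
);

-- Writers may append but never read or rewrite the log. On Supabase, the
-- default grants to anon and authenticated must be revoked explicitly too.
REVOKE ALL ON audit_log FROM PUBLIC;
GRANT INSERT ON audit_log TO app_user;  -- app_user: hypothetical writer role

CREATE OR REPLACE FUNCTION audit_row_change() RETURNS trigger
LANGUAGE plpgsql AS $$
BEGIN
  -- On recent Postgres versions (including 16), NEW is NULL for DELETE and
  -- OLD is NULL for INSERT, so COALESCE picks whichever row exists.
  INSERT INTO audit_log (tenant_id, table_name, operation, old_row, new_row)
  VALUES (
    COALESCE(NEW.tenant_id, OLD.tenant_id),
    TG_TABLE_NAME,
    TG_OP,
    CASE WHEN TG_OP <> 'INSERT' THEN to_jsonb(OLD) END,
    CASE WHEN TG_OP <> 'DELETE' THEN to_jsonb(NEW) END
  );
  RETURN NULL;  -- the return value of an AFTER trigger is ignored
END;
$$;

CREATE TRIGGER invoices_audit
AFTER INSERT OR UPDATE OR DELETE ON invoices
FOR EACH ROW EXECUTE FUNCTION audit_row_change();
```

On Supabase every API user runs as the shared authenticated role, so changed_by alone will not identify the person; you would likely want to record the user ID from the JWT as well.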

Microsoft's governance and compliance guidance for multitenant solutions confirms that multi-tenant architectures can meet HIPAA, PCI DSS, SOC 2, and ISO 27001 requirements, but flags the added controls overhead. AWS's HIPAA on EKS multi-tenancy whitepaper reaches the same conclusion: the regulation allows multi-tenancy, but per-customer audits and BAA contracts often do not.

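Three regulatory regimes flip the decision toward silo: HIPAA, when a customer wants a BAA written per environment (often with customer-managed keys); PCI DSS Level 1; and sovereign data residency clauses (EU-only, India DPDP, Australia, GovCloud).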

The pattern most SaaS converges to is the bridge model again. Pool for non-regulated and SMB tiers. Silo for the small set of customers whose contract or compliance regime demands it. That is the trajectory we plan for on healthcare and fintech builds: the architecture starts pool (or bridge from day one, when BAA-bound customers are in scope at launch), the first enterprise contract that demands isolation introduces a silo, and the enterprise plan price more than covers the per-tenant infrastructure cost of the silo tier.

Noisy neighbor is the canonical failure mode of the pool model. One tenant runs a heavy export, an N+1-laden cron job, or an unbounded report query, and every other tenant on the shared database sees latency spike. The mitigations are well-known, layerable, and required from day one of any pool-model SaaS that intends to grow.

There are six mitigations: per-tenant rate limits, statement timeouts, connection pool quotas, tenant_id in every log and metric, dedicated read replicas for heavy tenants, and Citus sharding on tenant_id, which is the most expensive to implement. Three of them in more detail:

- statement_timeout per role or per request. A tenant's runaway query hits the timeout, gets killed, and stops eating shared resources. We default to 30 seconds for user-facing queries and 5 minutes for explicit background jobs.
- tenant_id in every log, metric, and trace. You cannot fix a noisy neighbor without first knowing which tenant is the noisy one. Every structured log line, every Prometheus metric label, and every OpenTelemetry span attribute should carry tenant_id. Add it on day one; adding it later is painful.
- Citus sharding on tenant_id once one tenant is more than 10% of total load. Citus turns a single Postgres database into a sharded cluster keyed on tenant_id. Heavy tenants get their own shard; light tenants share one. This is the highest-cost, highest-payoff mitigation, and it is reversible if you keep the schema compatible.

The decision threshold is load skew. If your top tenant is consuming 5% of total database load, you do not have a noisy neighbor problem yet, and any of the first four mitigations is enough. If your top tenant is consuming 30% of total load, the database is already under structural strain, and you need either Citus sharding or a bridge migration that promotes that tenant out of the pool. We have done both, choosing based on the cost of the migration relative to the cost of sharding.
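A minimal sketch of the statement_timeout and Citus sharding mitigations. The roles app_user and app_jobs are hypothetical and must already exist; the timeouts match the defaults above. The Citus part assumes the extension is available and reuses the invoices table from the RLS example; check the Citus documentation for your version, including how it handles the RLS policies already on the table, before relying on it.

```sql
-- Role-level defaults, applied to every new session the role logs in with.
ALTER ROLE app_user SET statement_timeout = '30s';   -- user-facing API
ALTER ROLE app_jobs SET statement_timeout = '5min';  -- explicit background jobs

-- Per-request override, scoped to one transaction so it is pooler-safe.
BEGIN;
SET LOCAL statement_timeout = '5s';
SELECT count(*) FROM invoices;  -- cancelled with an error if it runs past 5 seconds
COMMIT;

-- Citus (requires citus in shared_preload_libraries). Primary keys and unique
-- constraints on a distributed table must include the distribution column.
CREATE EXTENSION IF NOT EXISTS citus;
SELECT create_distributed_table('invoices', 'tenant_id');

-- Move one heavy tenant onto its own shard; 'CASCADE' also isolates the
-- shards of tables colocated with invoices. The UUID is a placeholder.
SELECT isolate_tenant_to_new_shard(
  'invoices', '00000000-0000-0000-0000-000000000001'::uuid, 'CASCADE');
```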

The metric to track is not "average query latency". It is "p95 query latency segmented by tenant_id". A pool-model SaaS where most tenants see 200ms p95 and one tenant sees 4s p95 is a SaaS with one noisy neighbor and a fixable problem. A pool-model SaaS where every tenant's p95 has been creeping up for three months is a SaaS that needs sharding.
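A sketch of that segmented view, assuming request timings land in a hypothetical request_log table with one row per request. The CTE stands in for that table with a few illustrative rows so the query runs as-is. Next to p95 per tenant it reports each tenant's share of total query time, a rough proxy for the load-skew percentages used above.

```sql
WITH request_log (tenant_id, duration_ms) AS (
  -- Illustrative rows only; replace this CTE with your real request log.
  VALUES
    ('tenant-a', 180.0::float8), ('tenant-a', 210.0), ('tenant-a', 190.0),
    ('tenant-b', 150.0), ('tenant-b', 230.0),
    ('tenant-c', 3900.0), ('tenant-c', 4100.0), ('tenant-c', 250.0)
)
SELECT
  tenant_id,
  round(percentile_cont(0.95) WITHIN GROUP (ORDER BY duration_ms)::numeric, 0) AS p95_ms,
  -- Share of total query time across all tenants (window over the grouped sums).
  round((100.0 * sum(duration_ms) / sum(sum(duration_ms)) OVER ())::numeric, 1)
    AS pct_of_total_time
FROM request_log
GROUP BY tenant_id
ORDER BY p95_ms DESC;
```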

In multi-tenant SaaS, the Stripe Customer object maps 1:1 to a tenant (organization, workspace, account), not to an individual user. This is the single most consequential billing decision in your data model, and getting it wrong forces a painful migration the day you launch a team plan or an enterprise contract.

The pattern: tenant gets created, Stripe Customer gets created, Stripe Subscription belongs to the Customer, and entitlements (plan, seat count, usage limits) flow from the Subscription back into your tenant metadata via Stripe webhooks. The Stripe Customer Portal then gives each tenant a self-serve interface to manage their own billing without touching yours.
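A minimal sketch of that mapping in the control-plane schema. Table and column names are hypothetical. What it illustrates is structural: the Stripe Customer and Subscription IDs live on the tenant row, users only reference their tenant, and the webhook handler writes entitlements back onto the tenant, keyed by the Stripe Customer ID.

```sql
CREATE TABLE tenants (
  tenant_id              uuid PRIMARY KEY DEFAULT gen_random_uuid(),
  name                   text NOT NULL,
  stripe_customer_id     text UNIQUE,  -- one Stripe Customer per tenant
  stripe_subscription_id text UNIQUE,
  plan                   text NOT NULL DEFAULT 'free',
  seat_limit             integer,
  updated_at             timestamptz NOT NULL DEFAULT now()
);

CREATE TABLE users (
  user_id   uuid PRIMARY KEY DEFAULT gen_random_uuid(),
  tenant_id uuid NOT NULL REFERENCES tenants (tenant_id),
  email     text NOT NULL
  -- Deliberately no Stripe column: users never map to a Stripe Customer.
);

-- What a webhook handler might run for customer.subscription.created/updated,
-- with values read from the Subscription object in the event payload.
PREPARE apply_subscription(text, integer, text, text) AS
  UPDATE tenants
  SET plan = $1,
      seat_limit = $2,
      stripe_subscription_id = $3,
      updated_at = now()
  WHERE stripe_customer_id = $4;

-- Example with placeholder Stripe IDs:
INSERT INTO tenants (name, stripe_customer_id) VALUES ('Acme', 'cus_example123');
EXECUTE apply_subscription('growth', 10, 'sub_example456', 'cus_example123');
```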

Three pricing shapes work cleanly on this model. Per-seat scales with the user count inside a tenant. Usage-based prices on tenant-scoped metrics (events ingested, rows stored, API calls, GB processed). Hybrid combines a base plan with usage overage. All three work. What does not work is treating each user as a Stripe Customer in a multi-tenant product, because the moment a tenant adds a second user, the billing model breaks.

For B2B SaaS targeting both SMB and enterprise, plan two-tier pricing into the tenant model from day one. Standard plans live in the pool. Enterprise plans trigger the bridge migration. The Stripe Subscription metadata carries the plan, and your tenant routing layer reads that metadata to decide which database connection string to hand out at request time. We have shipped this on 4 of our last 5 SaaS builds.

When an enterprise contract demands a dedicated environment for one customer, you do not re-architect the whole platform. You promote that one tenant out of the pool into a silo. This is the bridge migration in action, and it is an 8-step playbook with one checklist item per step.

The 8 steps:

1. Snapshot.
2. Provision.
3. Restore.
4. Dual-write.
5. Cutover.
6. Verify.
7. Decommission.
8. Post-mortem.

When exporting, take only the rows whose tenant_id matches. Use pg_dump --schema=... if you are on schema-per-tenant, or filtered COPY queries if you are on pool-with-RLS.

The cost of a per-customer promotion is [VP-APPROVED RANGE] of engineering time per migration, depending on data volume, schema complexity, and how many cross-tenant features need carve-outs. Price it into the enterprise plan as a one-time onboarding fee, or amortize it across the first year of the enterprise contract. Do not absorb it as a sunk cost on the SaaS P&L.

The trap most teams fall into is treating the migration as a one-off engineering project. The third time you do it, the playbook should be an internal runbook, not a Slack thread. We script steps 1, 2, 3, 6, and 7. Steps 4 and 5 stay manual because cutover risk is real and the on-call engineer should be awake for it.
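A sketch of how step 1 (snapshot), step 3 (restore), and step 6 (verify) might be scripted for a pool-with-RLS tenant from psql. It reuses the invoices table and a placeholder tenant UUID, and assumes that on both databases you connect as a role RLS does not apply to (the table owner or a BYPASSRLS role), since COPY FROM is rejected for roles that are subject to RLS. \copy runs client-side, so the CSV lands on the machine running psql. Repeat per tenant-scoped table, parents before children.

```sql
-- Step 1 (snapshot), against the pooled database.
-- \copy must fit on one line.
\copy (SELECT * FROM invoices WHERE tenant_id = '00000000-0000-0000-0000-000000000001') TO 'invoices.csv' WITH (FORMAT csv, HEADER)

-- Step 3 (restore), against the dedicated database, after creating the schema
-- there from the same migrations as the pool so the column order matches.
\copy invoices FROM 'invoices.csv' WITH (FORMAT csv, HEADER)

-- Step 6 (verify): run on both databases and compare the two result rows.
-- Both sessions need the same TimeZone and DateStyle, because the hash is
-- computed over each row's text representation.
SELECT
  count(*) AS row_count,
  md5(string_agg(i::text, '|' ORDER BY i::text)) AS content_hash
FROM invoices AS i
WHERE i.tenant_id = '00000000-0000-0000-0000-000000000001';
```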

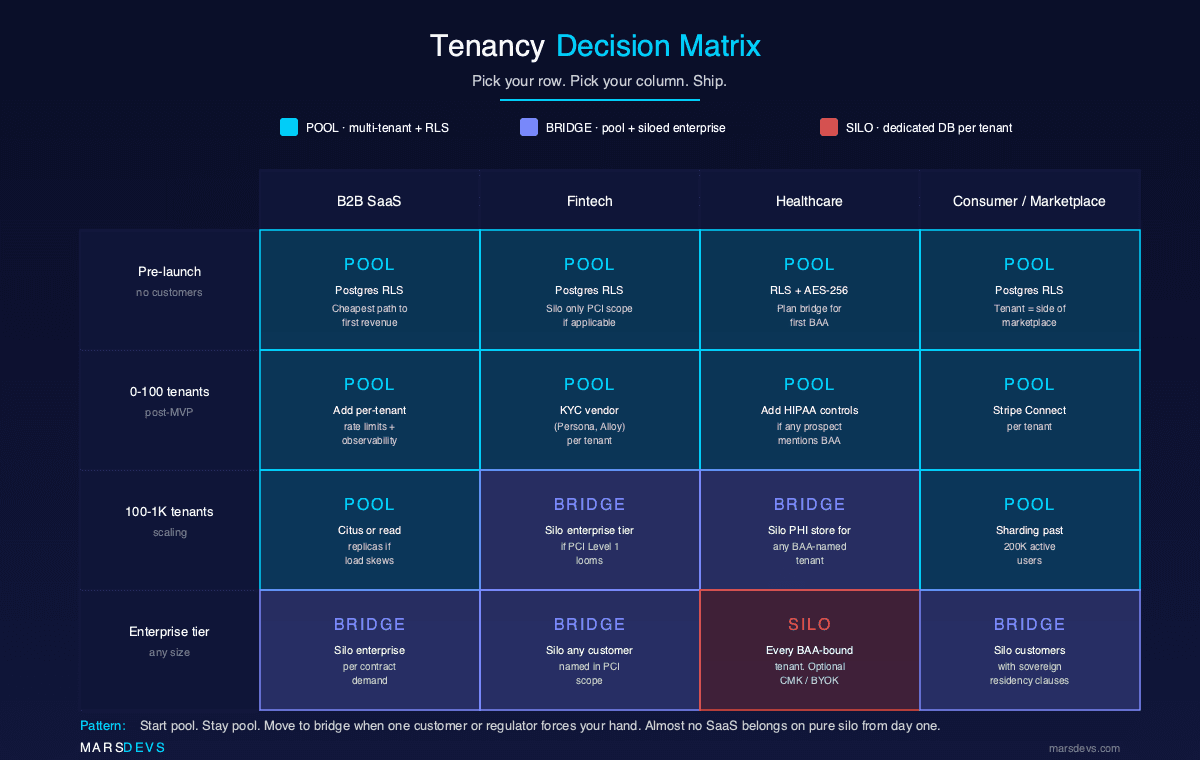

The right tenancy model depends on two axes: where your SaaS is in its lifecycle and what industry your customers come from. The decision matrix below collapses the entire 2026 decision space into 16 cells. Find your row, find your column, ship.

| Stage | B2B SaaS | Fintech | Healthcare | Marketplace |
|---|---|---|---|---|
| Pre-launch (no customers yet) | Pool with Postgres RLS. Cheapest path to first revenue. | Pool with Postgres RLS. Silo only the PCI scope if applicable. | Pool with RLS plus encryption at rest. Plan bridge for first BAA. | Pool with Postgres RLS. Tenant = side of the marketplace. |
| Post-MVP (1 to 25 customers) | Pool. Add per-tenant rate limits and observability. | Pool. KYC vendor (Persona, Alloy) per tenant. | Pool. Add HIPAA controls if any prospect mentions BAA. | Pool with Stripe Connect per tenant. |
| Scaling (25 to 200 customers) | Pool. Add Citus or read replicas if load skews. | Bridge. Silo enterprise tier if PCI Level 1 looms. | Bridge. Silo PHI store for any BAA-named tenant. | Pool. Sharding becomes valuable past 200K active users. |
| Enterprise tier (any size, enterprise contracts) | Bridge. Silo enterprise per contract demand. | Bridge. Silo any customer named in PCI scope. | Bridge. Silo every BAA-bound customer. Optional CMK. | Bridge. Silo any customer with sovereign residency clauses. |

Pool = multi-tenant pooled with Postgres RLS. Silo = single-tenant dedicated database. Bridge = pooled standard plus siloed enterprise.

The pattern across the matrix is clear. Start pool. Stay pool until a paying customer or a regulator forces your hand. Move to bridge when one tenant or one contract or one compliance regime demands isolation, not before. Almost no SaaS belongs on pure silo from day one.

For founders pre-launch, this matrix collapses to one decision: start in the top cell of your column. For founders at the enterprise tier, the decision is which customers belong in the silo and how to price the migration into the contract. The middle two rows (post-MVP and scaling) are where most SaaS lives, and they are the rows where pool-with-RLS plus a clean bridge migration plan does the most work.

We have shipped 5 SaaS builds since 2023 across the archetypes most founders ask us about. Four of five ran on multi-tenant Postgres with RLS. One ran on a bridge model with siloed PHI infrastructure. The pattern is consistent enough that the tenancy choice is now a 30-second decision in our discovery call, not a week of architectural debate.

Project 1: B2B analytics SaaS for a Series A marketing platform. Pooled multi-tenant on Postgres with tenant_id. RLS on every table. Auth0 for organization modeling, Stripe Billing for usage-based pricing, Vercel and Supabase for the hosting plane. Shipped in 14 weeks. If we built this in 2026, we would swap Auth0 for Supabase Auth (it integrates natively with the RLS layer) and the architecture would otherwise be identical. The tenancy choice was right.

Project 2: Fintech MVP for a YC-backed embedded-payments startup. Pooled multi-tenant on Postgres with RLS for the core product. Stripe Connect for payouts, Persona for KYC, every PII field encrypted at rest with column-level encryption. Shipped in 9 weeks. We did not silo on day one because the customer was not yet under PCI Level 1 scope. The plan we shipped also included a bridge-migration runbook for the day they cross the PCI threshold.

Project 3: Healthcare analytics SaaS for a telehealth scale-up. Bridge model from day one. Standard tenants pooled. PHI store siloed per BAA-bound customer. AWS RDS Postgres with BAA, encryption at rest with customer-managed keys via AWS KMS, full audit trail to S3 with object-lock retention. Shipped in 16 weeks. The 30% cost overrun on this project came from compliance scope creep in week 7, not from the tenancy choice. The tenancy choice was the only one that worked under HIPAA per-customer BAAs.

Project 4: Two-sided marketplace SaaS for a B2B services vertical. Pooled multi-tenant on Postgres with RLS. Stripe Connect for payouts, Supabase Realtime for in-app messaging, a tenant-scoped review system. Shipped in 11 weeks. Marketplace tenancy is the same as B2B SaaS tenancy, with the wrinkle that "tenant" maps to "side of the marketplace" (provider or requester). RLS plus careful policy design handled both sides cleanly.

Project 5: Internal-ops SaaS for a logistics scale-up's ops team. Pooled multi-tenant on Postgres with RLS. For an internal product the multi-tenancy was almost vestigial (one tenant, the operating company, plus future expansion to subsidiary teams). Shipped in 8 weeks on the back of a Retool-first scoping pass. The tenancy choice cost nothing and gave us optionality for the day the client spins out a subsidiary that needs isolated data.

The reversal we would make on Project 1 is the auth layer, not the tenancy. Auth0 was sensible in 2023; in 2026 Supabase Auth is the right pick because it integrates with the RLS layer for free. Tenancy-wise, we would keep all five choices. Pool plus RLS for B2B analytics, fintech MVP, marketplace, and internal-ops. Bridge with siloed PHI for healthcare. The full project-by-project breakdown lives at the real cost of our last 5 SaaS builds, with cost ranges, team sizes, and post-mortems per archetype.

Multi-tenant or single-tenant: which is cheaper at scale?

Multi-tenant is cheaper per tenant at scale and lowers TCO by roughly 40% versus silo because fixed infrastructure cost amortizes across every tenant. Single-tenant scales linearly with customer count. The bridge model is the realistic compromise once you have more than ~10 enterprise customers paying enough to justify dedicated infrastructure.

Can I migrate from multi-tenant to single-tenant later?

Yes, per customer, via the 8-step bridge migration playbook (snapshot, provision, restore, dual-write, cutover, verify, decommission, post-mortem). Engineering cost lands at [VP-APPROVED RANGE] per promoted tenant. Price it into the enterprise plan as a one-time fee rather than re-architecting the whole platform.

Does HIPAA require single-tenant architecture?

No. HIPAA does not mandate single-tenant. Multi-tenant Postgres with strong logical isolation (RLS, encryption at rest with AES-256, TLS 1.2+ in transit, audit trails, signed BAA) can be HIPAA-compliant on AWS, Azure, and GCP. What pushes healthcare SaaS to silo is per-customer BAA contracts and procurement preferences, not the regulation itself.

Can Postgres handle 1,000 tenants in a single database?

Yes, comfortably, with Row-Level Security, indexes on tenant_id, and pgbouncer in transaction pooling mode. Schema-per-tenant works to ~100 to 500 tenants but adds N-times migration cost. Once load skews toward a few heavy tenants (one tenant past roughly 10% of total load), shard with Citus on tenant_id to keep query performance predictable.

What is row-level security in Postgres?

Row-Level Security (RLS) is a Postgres feature that enforces row visibility based on policies tied to the connecting role or JWT claim. With Supabase, the canonical policy is tenant_id = (auth.jwt() ->> 'tenant_id')::uuid. RLS enforces tenant isolation at the database, not the application, so a bug in your code cannot leak cross-tenant data as long as the policy itself is correct.

What is the bridge model in SaaS tenancy?

The bridge model is a hybrid where standard-tier customers share pooled infrastructure and enterprise-tier customers get siloed environments. The AWS SaaS Lens names it "bridge". It is the default 2026 pattern for SaaS that sells to both SMB and enterprise, because it captures both segments without rebuilding from scratch.

What is "noisy neighbor" in a multi-tenant SaaS?

Noisy neighbor is the failure mode where one tenant's load (heavy queries, cron jobs, exports) degrades performance for other tenants on shared infrastructure. Mitigate with per-tenant rate limits, statement timeouts, connection pool quotas, dedicated read replicas for heavy tenants, and Citus sharding once one tenant is past 10% of total load.

How much does a multi-tenant SaaS cost to build at MarsDevs?

Based on our current pricing: a SaaS platform runs $10,000 to $50,000 for standard scope; complex or enterprise scope runs $30,000 to $200,000. Our hourly rate is $15 to $25. Tenancy choice does not materially change MVP cost; it changes long-term operations cost. See the cost to build a SaaS in 2026 breakdown for the full quote-to-launch math.

Most SaaS founders we talk to spend two to four weeks debating tenancy in architectural reviews. After 80+ products shipped since 2019, our answer is faster. Pool with Postgres RLS by default. Bridge when a customer or regulator forces it. Silo only for the small fraction of contracts where the math justifies linear cost growth.

We take on 4 SaaS projects per month. If you are picking between agency quotes or stress-testing your day-one architecture, book a free 30-minute scoping call. We will map your archetype to the closest of our 5 recent builds, name the tenancy model, and give you a real cost range backed by our current pricing. Visit /contact or read how to build a SaaS product for the full engagement walkthrough.

For the broader architectural picture, the SaaS architecture guide for startups covers control plane, data plane, observability, and the full bridge-model setup in one piece.

Co-Founder, MarsDevs

Vishvajit started MarsDevs in 2019 to help founders turn ideas into production-grade software. With deep expertise in AI, cloud architecture, and product engineering, he has led the delivery of 80+ software products for clients in 12+ countries.
