We use Claude Code daily. Here is the verified 2026 comparison: pricing, features, benchmarks, and the use-case decision matrix, from the team that ran 8 sub-agents in parallel on this very article.

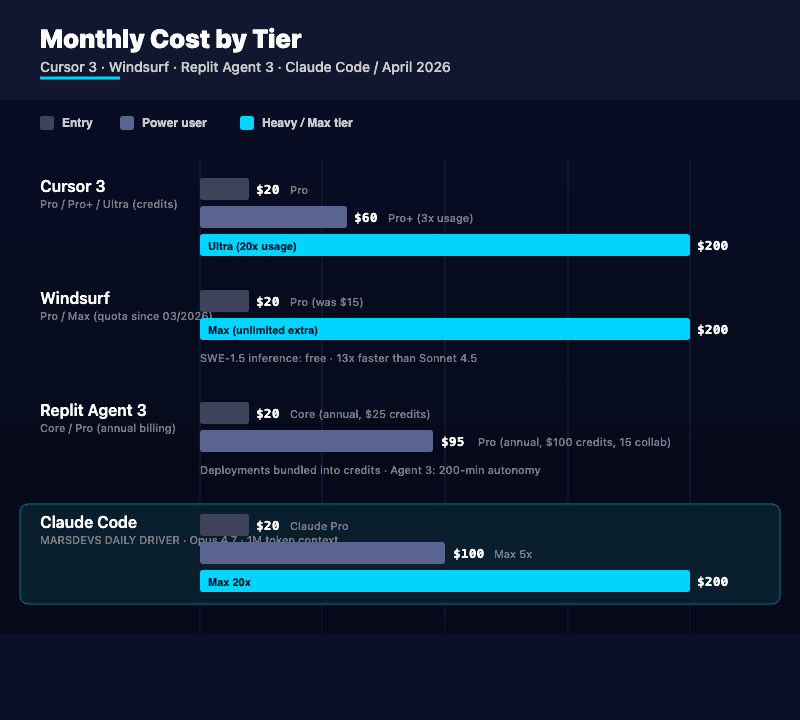

TL;DR: As of April 2026, Claude Code on Opus 4.7 with a 1M-token context wins for serious engineering work. It runs the entire MarsDevs internal SEO pipeline daily: parallel sub-agents, GSC scripts, Strapi push automation. Cursor 3 ($20/mo Pro, $60/mo Pro+, $200/mo Ultra) is still the best AI IDE for daily inline editing. Windsurf ($20/mo Pro after the March 2026 price hike) keeps the value angle alive with free SWE-1.5 inference. Replit Agent 3 ($20/mo Core, 200-minute autonomous runs) is still the fastest path from idea to deployed app for non-technical founders.

By the MarsDevs Engineering Team. Updated April 30, 2026. Based on AI coding tools we use daily across 80+ shipped products in 12 countries.

Twelve months ago the question was "GitHub Copilot or nothing?" That era is over. The AI coding tools market hit an estimated $12.8 billion in 2026, up from $5.1 billion in 2024, according to AI coding adoption tracking data. Stack Overflow's 2025 Developer Survey puts daily AI tool use at 51% of professional developers, with 84% using or planning to use AI in their workflow.

The 2026 numbers tell a sharper story. Claude Code at 28% and Cursor at 24% account for over half of primary-tool selections. Most developers run a three-tool stack rather than committing to one. The category itself has fractured into AI-native IDEs (Cursor, Windsurf), cloud-first builders (Replit), and terminal-based agents (Claude Code, plus newer entries like GitHub Copilot CLI and Aider).

If you are a founder picking tools, three things decide it: your team's technical depth, your project complexity, and whether you need an AI inside an editor or one that runs across your entire codebase autonomously. Pick wrong and you waste weeks. Pick right and you cut development time by 40 to 50%.

MarsDevs is a product engineering company that builds AI-powered applications, SaaS platforms, and MVPs for startup founders. We have shipped with all four tools across 80+ products. Claude Code is our daily driver in 2026. The internal automation behind this very SEO content pipeline runs on Claude Code with sub-agents. So do our Search Console reporting, our S3 image automation, and our Strapi push scripts. We are not theorising. We live in it.

This guide covers the best AI coding tools in 2026 across features, pricing, code quality, and real-world use cases so you can make a decision that saves time and money.

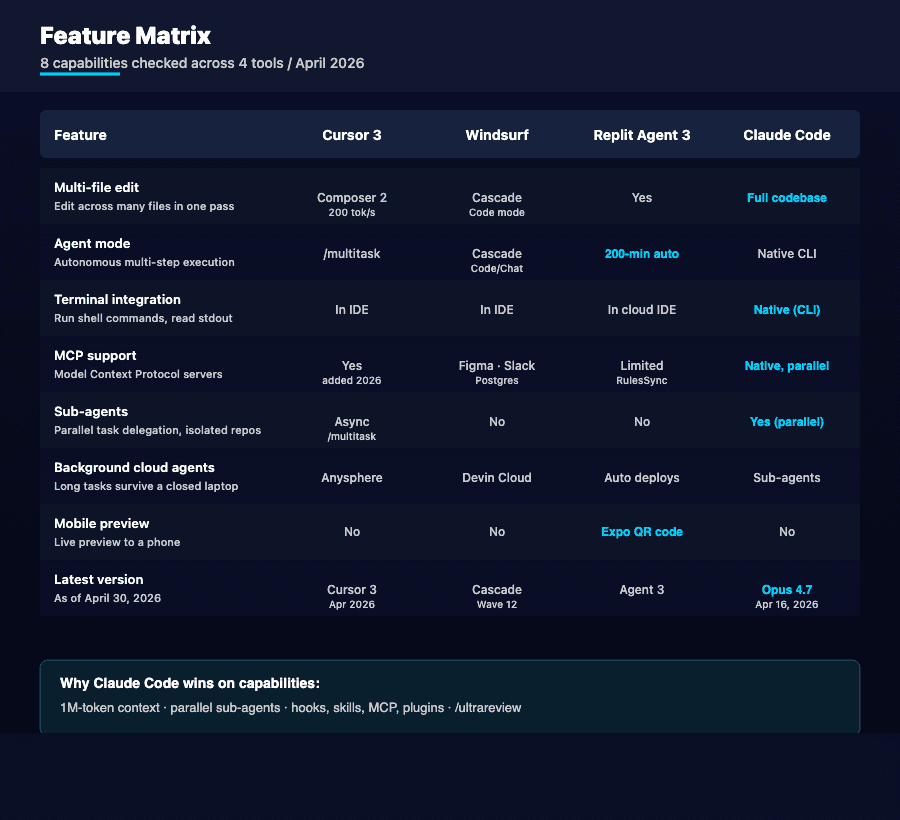

Here is the full feature comparison across all four tools before we break down each one.

| Feature | Cursor | Windsurf | Replit | Claude Code |
|---|---|---|---|---|
| Type | AI-native IDE (VS Code fork) | AI-native IDE (VS Code fork) | Cloud IDE + deployment | CLI-based agent |
| Latest version | Cursor 3 (April 2026) | Cascade Wave 12 (March 2026) | Agent 3 (late 2025, refined 2026) | Opus 4.7 in Claude Code (April 16, 2026) |
| Agent mode | Yes (async subagents, /multitask) | Yes (Cascade Code + Chat modes) | Yes (Agent 3, 200-min autonomy) | Yes (terminal-native sub-agents) |
| Multi-file editing | Yes (Composer 2, 200 tok/s) | Yes (Cascade) | Yes (Agent 3) | Yes (full codebase) |
| Inline code completion | Yes (best-in-class Tab) | Yes (unlimited Supercomplete) | Yes (basic) | No (not an IDE) |
| Context window | Varies by model | Varies by model | Varies by model | Up to 1M tokens (Opus 4.7) |
| Background cloud agents | Yes (Anysphere infra) | Yes (Devin Cloud sessions) | Yes (autonomous deploys) | Yes (sub-agents in parallel) |
| MCP support | Yes (added 2026) | Yes (Figma, Slack, Postgres) | Limited (RulesSync) | Yes (native, parallel servers) |
| Skills / Hooks | Yes (skills, hooks) | Codemaps, Memories | replit.md / RulesSync | Skills, hooks, sub-agents, plugins |
| Deployment built-in | No | Beta (App Deploys) | Yes (one-click, included in credits) | No |
| Proprietary models | No (uses third-party) | Yes (SWE-1.5, free) | Yes (built-in) | Yes (Claude Opus 4.7, Sonnet 4.6, Haiku 4.5) |
| Best for | Daily IDE workflow | Budget-conscious teams | Non-technical builders | Complex codebases + automation |
| Starting price (Pro) | $20/month | $20/month (was $15) | $20/month (Core, billed annually) | $20/month (Claude Pro) |

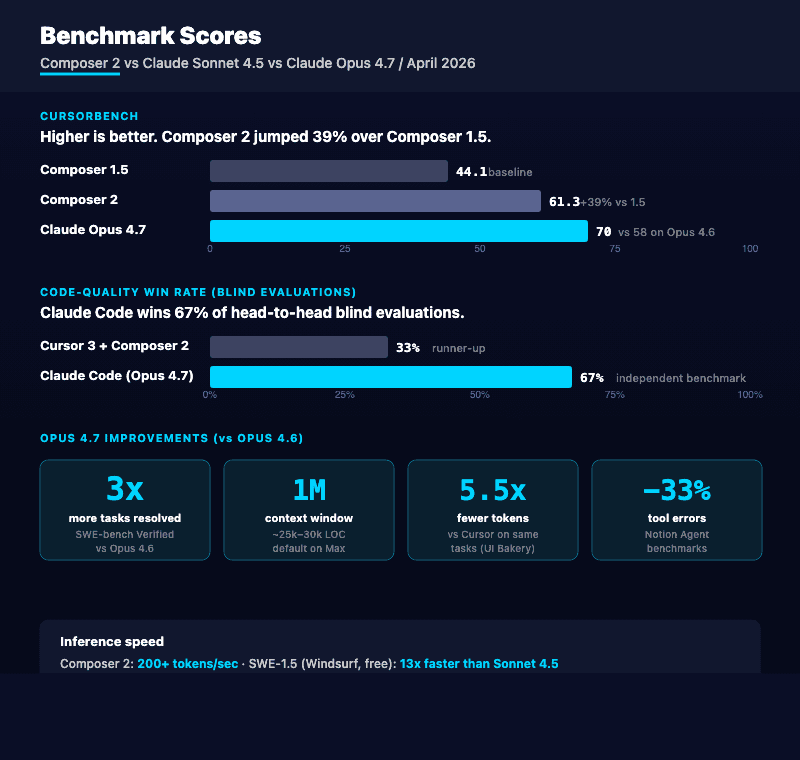

Cursor 3 shipped in April 2026 with Composer 2 as the default Auto-mode model at 200+ tokens per second and 61.3 on CursorBench, a 39% jump over Composer 1.5. Hobby is still free. Pro is $20/month. The new Pro+ tier sits at $60/month with 3x usage on premium models. Ultra runs $200/month with 20x usage. Teams stay at $40/seat. MCP, skills, and hooks are now first-class on Pro and above.

Async subagents and /multitask. Cursor's /multitask command runs async subagents in parallel, breaking a request into smaller chunks so you do not block on a single agent. It is the closest IDE-native equivalent to what Claude Code does in the terminal.

Background cloud agents that survive a closed laptop. Long refactors and codebase-wide migrations run on Anysphere's cloud infrastructure. Start a task locally, hit "run in cloud" when it is going to take 30+ minutes, walk away. Notifications fire when it finishes. Improved worktrees in the Agents Window let you run isolated tasks across branches and pull any branch into your local foreground with one click.

Best-in-class Tab completions. Cursor still predicts the next 5 to 10 lines based on your project context. Across all AI coding tools, developers consistently rate this as the single most useful daily feature.

Composer 2 for multi-file changes. Need to change an API response format that touches 15 components? Composer 2 shows diffs across every affected file before you accept anything. You review once, apply everywhere. The 200 tok/s speed makes the review loop feel instant.

Model flexibility. Cursor lets you switch between GPT-5, Claude Opus 4.7, Gemini 3.1 Pro, and other models per task. Quick inline fix? Use a fast model. Architecture decision? Route to Opus 4.7 or GPT-5. That flexibility matters when you are balancing speed against cost.

The credit-based bill spikes. Cursor switched to credit-based billing in June 2025 and that has not changed. Your $20/month Pro plan includes $20 in credits, which heavy agent mode usage drains in days, not weeks. Some teams report daily overages of $10 to $20 when running premium models hard. Pro+ at $60/month softens this, but you are now paying triple the entry price.
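To put "days, not weeks" into numbers, here is a back-of-the-envelope sketch. It assumes your daily premium-model spend sits in the same $10 to $20 range as the reported overages, which is our simplification for illustration; the helper name is hypothetical and this is not how Cursor meters usage internally.

```python
def days_of_included_credits(included_credits: float, daily_spend: float) -> float:
    """How many days the plan's included credits last at a steady daily spend."""
    if daily_spend <= 0:
        raise ValueError("daily_spend must be positive")
    return included_credits / daily_spend


# $20 of included Pro credits, spent at an assumed $10 or $20 per day.
for spend in (10, 20):
    days = days_of_included_credits(20, spend)
    print(f"${spend}/day -> included credits last {days:.1f} days")
```

At that pace the included credits cover one to two working days, and everything after that is overage.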

No deployment pipeline. Cursor is an editor, full stop. You handle CI/CD, hosting, and deployment elsewhere. For a founder who wants to go from code to live app without managing infrastructure, that is friction.

Async subagents have a learning curve. Tab works out of the box. Getting maximum value from /multitask, async worktrees, and background cloud agents takes a week or two of figuring out which prompt patterns route to which agent.

Windsurf is an AI-native IDE built by Codeium (now branded Windsurf) as a VS Code fork, featuring the Cascade agentic engine and the proprietary SWE-1.5 model. In March 2026, Windsurf scrapped its old credit-based system for quota-based billing and raised Pro from $15 to $20/month. It still positions as the affordable Cursor alternative with one unique advantage: proprietary AI models that cost zero credits to run.

Cascade for deep debugging. Cascade now runs in two modes: Code (multi-file changes, terminal commands, auto-fix loops) and Chat (codebase questions, design-level discussion). Cascade also remembers architectural decisions across sessions. Tell it "we use repository pattern with dependency injection" and it stops suggesting business logic in controllers a week later.

Free proprietary models, still. SWE-1.5 burns zero credits. SWE-1, SWE-1-mini, and swe-grep also run free. SWE-1.5 is roughly 13x faster than Claude Sonnet 4.5 for inference. Routine tasks use the free model. You only spend quota on premium models like Claude Opus 4.7 or GPT-5 when you actually need them.

Codemaps for visual code navigation. AI-annotated visual maps of your codebase are unique to Windsurf. No competitor has shipped an equivalent yet. For onboarding to a new repo or explaining architecture to a non-technical co-founder, Codemaps is genuinely useful.

Native MCP integrations. Windsurf connects directly to Figma, Slack, and PostgreSQL through MCP. For frontend teams that live in Figma or backend teams that query Postgres dozens of times a day, that integration removes a context switch.

Lower total cost still beats Cursor on heavy use. At $20/month Pro after the price hike, Windsurf only matches Cursor on entry price. But the free SWE-1.5 inference means most solo developers stay at $20 to $30/month total. Cursor's credit drain on premium models pushes the same usage to $40 to $60.

Smaller ecosystem. Cursor has a larger community, more tutorials, more third-party extensions. When you hit an edge case, finding help for Windsurf-specific issues takes longer.

The $5/month price hike erased the budget pitch. Pro went from $15 to $20 in March 2026. The "cheaper than Cursor" message no longer holds at the entry tier. The real value now sits at the Max tier ($200/month, unlimited extra usage at API price), where heavy users get a flatter cost curve than Cursor Ultra.

Less polished multi-file review. Cascade handles multi-file changes well. Cursor's Composer 2 still wins the visual diff comparison with clearer syntax highlighting and a faster apply-and-revert loop.

Replit is a cloud-first development platform with an AI Agent that builds, deploys, and hosts full-stack applications from natural language prompts. Replit Agent 3, refined through 2026, runs autonomous trajectories up to 200 minutes per session (up from 20 minutes in Agent 2 and 2 minutes in Agent 1). Replit overhauled pricing in January 2026: Core is $20/month billed annually with $25 in monthly credits, Pro is $95/month annually with $100 in credits and up to 15 collaborators. Deployments are now bundled into credits rather than billed separately.

Zero-to-deployed in minutes. Type "build me a SaaS dashboard with user auth and Stripe billing" into Replit Agent 3, and it spins up the environment, installs dependencies, writes code, deploys to a live URL, and, if you asked for a mobile app, previews it on your phone via an Expo QR code. No terminal. No Git. No DevOps. For rapid prototyping and vibe coding, nothing else comes close.

200-minute autonomous runs. Agent 3 handles complex, hours-long trajectories with minimal human oversight. The self-healing is real: when a build breaks, Agent 3 reads the error, patches, retests, and continues. For founders who want to step away and come back to a working app, this is the only tool in this comparison built for that.

Real-time multiplayer collaboration. Multiple people edit the same project at the same time, Google Docs style. For a founder and developer pair iterating on a prototype together, this removes the Git merge workflow entirely.

Native integration UI. Connecting to Notion, Dropbox, Stripe? Agent 3 surfaces a UI for secure authentication. No more copy-pasting API keys into a .env. RulesSync via replit.md keeps Agent configuration consistent across projects.

Bundled deployment, no surprise bills. The January 2026 pricing overhaul folded autoscale, scheduled, and reserved-VM deployment costs into the monthly credit allowance. One bill. Predictable.

Code quality ceiling. Agent 3 produces working code fast, but the architecture tends toward "whatever gets it running." For production systems that need scale, security, and maintainability, Replit-generated code usually needs heavy refactoring. We see this firsthand at MarsDevs: founders come to us with a Replit prototype that demos beautifully but breaks under real load.

Limited model access. You cannot switch to Claude Opus 4.7 or GPT-5 for a harder task the way you can in Cursor or Windsurf. You use what Replit provides.

Costs add up for ambitious projects. Core's $25 in credits covers light use. Real projects with daily Agent 3 usage push into Pro at $95/month (billed annually). For a single founder building a single project, that is fine. For a 5-person team, a $40/seat Cursor Teams plan plus self-hosted deployment is cheaper.

Vendor lock-in risk. Replit's environment is proprietary. Migrating to your own infrastructure means extracting code and rebuilding the deployment pipeline from scratch. For founders building an MVP they plan to scale, this is a real concern.

Claude Code is Anthropic's terminal-based AI coding agent. It runs in your terminal, reads your entire codebase, and executes multi-step tasks autonomously: reading files, writing code, running commands, committing, opening pull requests. Opus 4.7 landed in Claude Code on April 16, 2026 with the 1M-token context window enabled by default on Max, Team, and Enterprise. Anthropic reports 3x more tasks resolved on SWE-bench Verified versus Opus 4.6, 70% on CursorBench (versus 58%), and a third fewer tool errors on Notion Agent benchmarks.

Largest context window in the industry. Opus 4.7 processes up to 1 million tokens in a single context. That is roughly 75,000 or more lines of code, depending on language and line length. Where other tools chunk your codebase and lose context between files, Claude Code holds your entire project in working memory. For large codebases, this is the difference between an AI that understands your architecture and one that guesses.

Sub-agents, skills, hooks, MCP, plugins. Claude Code's three core extensibility primitives are sub-agents (parallel task delegation), skills (reusable prompt templates that surface as slash commands), and hooks (lifecycle automation on tool use). Each sub-agent works on an isolated copy of the repo, so multiple sub-agents can edit files in parallel without conflicts. MCP servers now connect in parallel during startup. Plugins ship via a dependency-managed /plugin interface.

/ultrareview for deep code review. Run a full multi-agent code review in the cloud on a branch or GitHub PR. One command. Multiple critique angles. Auto mode is now available for Max subscribers on Opus 4.7, which means hands-off execution with safety controls.

Highest code quality scores. In blind testing, Claude Code wins 67% of quality evaluations for correctness, completeness, and structure. Independent benchmarks show its outputs need significantly less manual revision than competing tools. Our engineers see the same pattern: Claude Code's first-pass output needs fewer fixes than anything else we run.

Token efficiency. Claude Code uses 5.5x fewer tokens than Cursor for identical tasks, per UI Bakery's benchmark. Fewer tokens means lower cost per task and higher accuracy per dollar. One independent test measured 8.5 accuracy points per dollar for Claude Code versus 6.2 for Cursor.

Full workflow automation. Claude Code reads GitHub issues, creates branches, writes implementations, runs your test suite, fixes failures, and opens pull requests. An engineer describes a feature, walks away, comes back to a ready-to-review PR. For teams doing AI-first MVP development, this autonomous capability shaves days off each feature.

This is not a vendor pitch. Claude Code runs the entire MarsDevs internal SEO automation stack. Here is what we built and ship with it daily as of April 2026.

Parallel sub-agent content writing pipeline. This very article was rewritten by a content_writer sub-agent invoked from a parent Claude Code session. We have eight sub-agents wired up: site_auditor, keyword_researcher, content_writer, content_humanizer, aeo_optimizer, visual_creator, page_formatter, post_publish_auditor. The parent agent dispatches them in parallel where dependencies allow. Wall clock for a full article cycle dropped from 6 hours to under 90 minutes.
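"In parallel where dependencies allow" is the part worth sketching. The snippet below is a minimal illustration in plain Python, not our actual orchestration code: the agent names are the real ones listed above, but the dependency edges and the `run_agent` stand-in are assumptions made up for the example.

```python
import asyncio
from graphlib import TopologicalSorter

# Hypothetical dependency map: each agent lists the agents it must wait for.
# Agent names come from the article; the edges are assumed for illustration.
DEPENDS_ON = {
    "site_auditor": set(),
    "keyword_researcher": set(),
    "content_writer": {"site_auditor", "keyword_researcher"},
    "content_humanizer": {"content_writer"},
    "aeo_optimizer": {"content_humanizer"},
    "visual_creator": {"content_writer"},
    "page_formatter": {"aeo_optimizer", "visual_creator"},
    "post_publish_auditor": {"page_formatter"},
}


async def run_agent(name: str) -> str:
    """Stand-in for invoking a real sub-agent; here it only yields control."""
    await asyncio.sleep(0)
    return name


async def dispatch(depends_on: dict[str, set[str]]) -> list[list[str]]:
    """Run agents in waves; every agent in a wave runs concurrently."""
    sorter = TopologicalSorter(depends_on)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # agents whose dependencies are done
        finished = await asyncio.gather(*(run_agent(n) for n in ready))
        sorter.done(*finished)
        waves.append(list(finished))
    return waves


waves = asyncio.run(dispatch(DEPENDS_ON))
for i, wave in enumerate(waves, start=1):
    print(f"wave {i}: {', '.join(wave)}")
```

Wave-based scheduling is the simplest version: a wave waits for its slowest agent before the next one starts. A production dispatcher would more likely start each agent the moment its own dependencies finish.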

Search Console reporting and analysis. We wrote gsc-analysis-2026-04-23.py and gsc-inspect-canonical.py as Python scripts that Claude Code drafted, debugged, and committed. They pull GSC data, segment by URL, flag canonical mismatches, and surface coverage issues. Human time on this was specifying intent and reviewing output. Claude Code wrote the OAuth flow, the API calls, the pandas transformations.
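As a rough idea of what "segment by URL, flag canonical mismatches" means in practice, here is a standard-library sketch. The row shape and field names (`user_canonical`, `google_canonical`) are assumptions for illustration and not the schema of our real scripts, which use pandas and live API data.

```python
from collections import defaultdict
from urllib.parse import urlsplit

# Hypothetical rows, shaped like a flattened URL-inspection export.
ROWS = [
    {"url": "https://example.com/blog/a",
     "user_canonical": "https://example.com/blog/a",
     "google_canonical": "https://example.com/blog/a"},
    {"url": "https://example.com/blog/b",
     "user_canonical": "https://example.com/blog/b",
     "google_canonical": "https://example.com/blog/b-old"},
    {"url": "https://example.com/services/x",
     "user_canonical": "https://example.com/services/x",
     "google_canonical": "https://example.com/services/x/"},
]


def normalize(url: str) -> str:
    """Lowercase the host and drop a trailing slash so trivial variants match."""
    parts = urlsplit(url)
    return f"{parts.scheme}://{parts.netloc.lower()}{parts.path.rstrip('/')}"


def section_of(url: str) -> str:
    """First path segment, used to segment the report (for example 'blog')."""
    segments = [s for s in urlsplit(url).path.split("/") if s]
    return segments[0] if segments else "(root)"


def canonical_mismatches(rows):
    """Group URLs whose declared canonical differs from the one Google chose."""
    flagged = defaultdict(list)
    for row in rows:
        if normalize(row["user_canonical"]) != normalize(row["google_canonical"]):
            flagged[section_of(row["url"])].append(row["url"])
    return dict(flagged)


print(canonical_mismatches(ROWS))
```

Normalizing before comparing keeps a trailing-slash difference from showing up as a false alarm, so only the `/blog/b` row is flagged here.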

S3 image and SVG automation. upload-covers.py, bust-image-cache.py, normalize-svgs.py, svgs-to-png.sh, swap-svg-to-png.py. Five scripts that handle the cover-image and inline-SVG pipeline for every published article. Claude Code wrote them all, reads them daily, modifies them when image specs change.

Strapi push pipelines. push-phase1-to-strapi.py and push-week2-to-strapi.py push markdown drafts into our Strapi CMS with frontmatter mapped to fields, internal links rewritten, and images attached. attach-week2-covers.py and publish-week2-backdated.py handle the publish-time work. All Claude Code authored.
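The frontmatter-to-field mapping is the core of a push script like these. Below is a simplified sketch under stated assumptions: the field names in `FIELD_MAP` and the sample draft are hypothetical, the parser handles only flat `key: value` frontmatter, and the actual HTTP call is left out. The `{"data": ...}` wrapper follows the request shape Strapi's REST API expects for creating entries.

```python
import json

# Hypothetical mapping from frontmatter keys to CMS field names.
FIELD_MAP = {"title": "title", "description": "metaDescription", "date": "publishedDate"}


def split_frontmatter(markdown: str):
    """Split a '---' delimited frontmatter block from the body.

    Handles flat 'key: value' lines only; a real pipeline would use a YAML parser.
    """
    if not markdown.startswith("---\n"):
        return {}, markdown
    header, sep, body = markdown[4:].partition("\n---\n")
    if not sep:  # no closing delimiter, treat the whole file as body
        return {}, markdown
    meta = {}
    for line in header.splitlines():
        key, colon, value = line.partition(":")
        if colon:
            meta[key.strip()] = value.strip().strip('"')
    return meta, body.lstrip("\n")


def build_payload(markdown: str) -> dict:
    """Map known frontmatter keys to CMS fields and attach the body as content."""
    meta, body = split_frontmatter(markdown)
    fields = {FIELD_MAP[k]: v for k, v in meta.items() if k in FIELD_MAP}
    fields["content"] = body
    return {"data": fields}


DRAFT = '---\ntitle: "Cursor vs Claude Code"\ndate: 2026-04-30\nslug: cursor-vs-claude-code\n---\n\nBody text.\n'
payload = build_payload(DRAFT)
print(json.dumps(payload, indent=2))
```

Keys missing from `FIELD_MAP` (here `slug`) are dropped rather than guessed, which keeps an unexpected frontmatter key from silently writing to the wrong field.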

Internal link and metadata fixes. fix-internal-links.py, fix-publish-dates.py, set-tech-stack.py. Claude Code reads the entire content directory, finds broken cluster links, fixes them in place, regenerates schema. Sub-agents run audits in parallel: one checks publish dates, one checks canonical URLs, one checks tech stack tags.
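Finding and fixing broken cluster links boils down to two steps: check every internal link target against the set of known pages, then rewrite the ones that moved. This sketch shows that idea on an in-memory dictionary; the page slugs, the redirect map, and both function names are made up for the example, and the real scripts work on files on disk.

```python
import re

# Matches markdown links with a site-relative target: [text](/internal/path)
LINK_RE = re.compile(r"\[([^\]]+)\]\((/[^)\s]*)\)")


def find_broken_links(pages: dict[str, str]) -> dict[str, list[str]]:
    """Map each page slug to the internal link targets that match no known page."""
    known = {slug.rstrip("/") for slug in pages}
    broken = {}
    for slug, markdown in pages.items():
        missing = [
            target
            for _, target in LINK_RE.findall(markdown)
            if target.split("#")[0].rstrip("/") not in known  # ignore anchors
        ]
        if missing:
            broken[slug] = missing
    return broken


def rewrite_links(markdown: str, redirects: dict[str, str]) -> str:
    """Replace link targets in place using an old-path to new-path map."""
    def swap(match: re.Match) -> str:
        text, target = match.group(1), match.group(2)
        return f"[{text}]({redirects.get(target, target)})"
    return LINK_RE.sub(swap, markdown)


# Hypothetical content directory, keyed by slug.
PAGES = {
    "/blog/ai-coding-tools": "See [pricing](/blog/cursor-pricing) and [MVP guide](/blog/mvp-guide#cost).",
    "/blog/mvp-guide": "Back to [the comparison](/blog/ai-coding-tools/).",
}

broken = find_broken_links(PAGES)
print(broken)
fixed = rewrite_links(PAGES["/blog/ai-coding-tools"], {"/blog/cursor-pricing": "/blog/cursor-3-pricing"})
print(fixed)
```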

We did not build any of this with Cursor or Windsurf. We tried both. The 1M context window plus sub-agents plus hooks made Claude Code the only tool that could hold the whole pipeline in its head and modify across boundaries without losing the thread.

No inline completions. Claude Code does not offer Tab-style code suggestions. No ghost text, no next-line predictions. If you want inline help while typing character by character, you need a separate tool. Most of our engineers run Cursor or Windsurf for that and Claude Code in a side terminal for the heavy work.

Terminal-first learning curve. Developers comfortable in the terminal pick up Claude Code in minutes. For less terminal-savvy users, the lack of a visual editor feels disorienting. The IDE extensions for VS Code, Cursor, Windsurf, and JetBrains help, but the core experience stays CLI-based.

No deployment features. Like Cursor, Claude Code focuses on code, not infrastructure. You handle deployment, hosting, and CI/CD separately.

Tied to Claude models. You cannot switch to GPT-5 or Gemini 3.1 Pro inside Claude Code. It uses Claude Opus 4.7, Sonnet 4.6, or Haiku 4.5 exclusively. For most tasks, Claude's models are competitive or better. Teams that need specific capabilities from other providers will need a different tool for those.

Three tools worth naming if you are evaluating the full field in April 2026.

GitHub Copilot CLI brought the Copilot coding agent to the terminal, with a model picker, self-review, built-in security scanning (code scanning, secret scanning, dependency vulnerability checks), and CLI handoff. It runs on Pro, Pro+, Business, and Enterprise plans. For teams already deep in GitHub's ecosystem, the integration is tighter than anything in this comparison. The trade-off is fewer extensibility primitives versus Claude Code's sub-agents and skills.

Aider is the open-source pair-programming CLI. It is free, model-agnostic, and supports 75+ LLM providers. You pay LLM providers directly. Aider's git-first philosophy turns every AI edit into a descriptive commit. It supports 100+ programming languages, automatically lints and tests, and works with Claude 3.7 Sonnet, DeepSeek R1, GPT-4o, or local models. For developers who want to run a fully self-hosted setup or use Aider against a local model, this is the choice.

OpenCode is the other major open-source CLI gaining ground in 2026, often compared to Aider for self-hosted teams.

We have shipped with Aider on a few client projects where the customer required self-hosted models for compliance. For everything else, Claude Code stays the daily driver.

Cost is one of the first questions founders ask. Here is a direct pricing comparison verified against vendor pages on April 30, 2026.

| Plan | Cursor | Windsurf | Replit | Claude Code |
|---|---|---|---|---|
| Free tier | Hobby (limited Tab + Agent) | Free (basic features) | Starter (1 app, daily credits) | Not available separately |
| Individual entry | $20/month (Pro) | $20/month (Pro, was $15) | $20/month (Core, billed annually) | $20/month (Claude Pro) |
| Power user | $60/month (Pro+, 3x usage) | Light (unlimited allowance, contact sales) | $95/month (Pro, billed annually) | $100/month (Max 5x) |
| Heavy usage | $200/month (Ultra, 20x usage) | $200/month (Max, unlimited extra) | Enterprise (custom) | $200/month (Max 20x) |
| Teams | $40/seat/month | $40/seat/month | Enterprise (custom) | $20/seat/month (Standard) + $100/seat (Premium with Claude Code) |
| Billing model | Credits (deplete by model) | Quota-based (since March 2026) | Bundled credits + deployments | Usage window (5-hour rolling) |

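Sticker price tells half the story. What actually hits your monthly bill depends on the billing model in the last row: credits that deplete by model, quotas, bundled deployments, or a rolling usage window.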

For startup teams comparing AI coding tools against hiring senior developers, these monthly costs are a fraction of one engineer's salary. The tools do not replace engineers. They multiply engineer output by 2 to 3x. If you are watching your runway and need to show investors traction before your next round, that multiplier matters more than the $20 to $200/month difference between these tools.

We have used all four tools across dozens of client projects. Here is how we match tools to use cases based on what has actually worked.

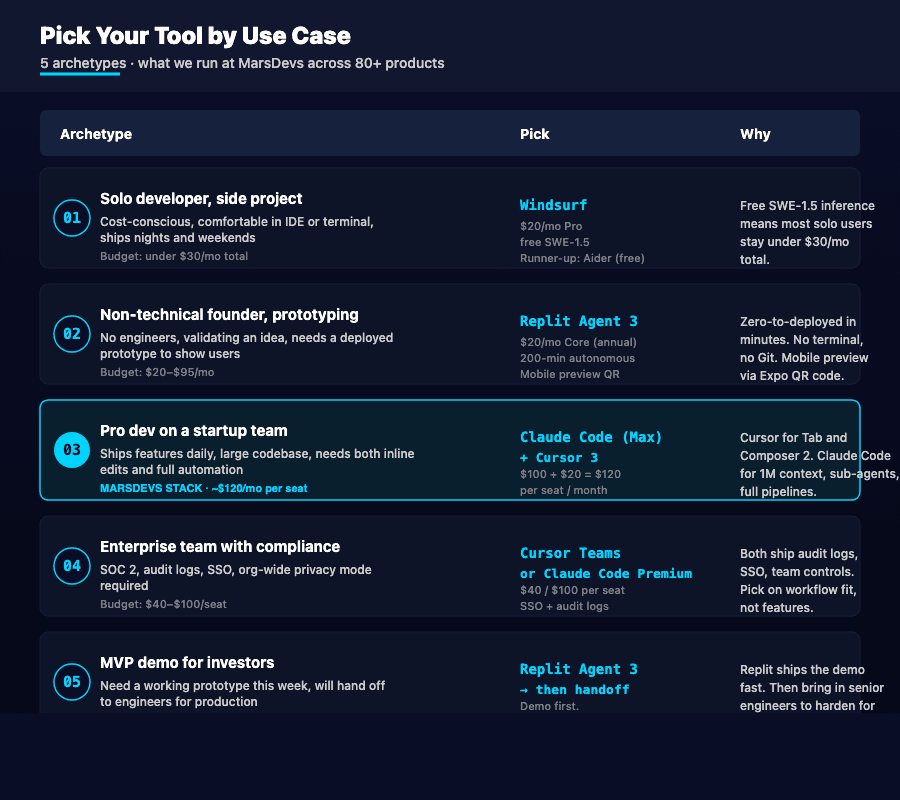

Pick Windsurf or Aider. Windsurf at $20/month with free SWE-1.5 inference still wins on price-to-value if you want an IDE. If you are happy in the terminal and want to control costs by paying LLM providers directly, Aider is free and model-agnostic.

Pick Replit Agent 3. No setup, no terminal, no Git knowledge. Describe your app in plain English, get a deployed prototype in hours, preview to your phone via QR code. Just know the code will need professional refactoring before scaling to production.

Pick Claude Code (Max) + Cursor 3. This is what we run at MarsDevs. Cursor for daily inline editing, Tab completions, and quick iterations. Claude Code for complex refactoring, full-pipeline automation, and multi-file work that needs the 1M context window. Combined cost: about $120/month per seat. The compounding productivity beats any single-tool stack we have measured.

Pick Cursor (Teams) or Claude Code (Team Premium). Both offer team management, audit logs, and SSO. Cursor Teams at $40/seat with org-wide privacy mode. Claude Code at $100/seat (Premium) for full Claude Code access plus team controls. Windsurf's Teams at $40/seat is also competitive.

Pick Replit Agent 3 for the demo, then hand off to professionals. Replit gets you a working prototype fast, perfect for investor conversations. When you need production architecture, security, and scale, bring in a team that builds AI MVPs for a living. Want to ship your MVP before runway runs out? Book a free strategy call and we will assess your codebase in 48 hours.

Here is the thing: the best AI coding tool is the one that matches your workflow, not the one with the longest feature list. We have shipped 80+ products across 12 countries. Our engineers pick different tools for different tasks every day.

| Use Case | Best Tool | Runner-Up | Why |
|---|---|---|---|
| Daily coding workflow | Cursor 3 | Windsurf | Best Tab completions, async subagents, Composer 2 |
| Budget-conscious development | Aider (free) | Windsurf | Pay LLM providers directly or get free SWE-1.5 |
| Non-technical prototyping | Replit Agent 3 | None close | Only tool with built-in deployment + 200-min autonomy |
| Complex refactoring | Claude Code | Cursor (Composer 2) | 1M token context plus sub-agents in parallel |
| Code review and debugging | Claude Code (/ultrareview) | Windsurf (Cascade) | Multi-agent analysis on whole branches |
| Multi-file feature work | Cursor 3 (Composer 2) | Claude Code | 200 tok/s visual diff review |
| Team collaboration | Replit | Cursor (Teams) | Real-time multiplayer editing |
| Self-hosted / open-source | Aider | OpenCode | Free, model-agnostic, 75+ LLM providers |

Stack Overflow's 2025 Developer Survey found that 46% of developers actively distrust the accuracy of AI tools, while only 33% trust it. Just 3% report "highly trusting" the output. McKinsey research cited in earlier 2025 reporting also found that AI coding tools cut routine coding time by 46%. That is significant. But 75% of developers still manually review every AI-generated snippet before merging.

These tools speed up development. They do not replace architectural decisions, security audits, performance optimization, or the experience to know which corners are safe to cut and which are not. A vibe-coded MVP can impress investors in a demo. Scaling that MVP to handle 10,000 concurrent users, process payments securely, and pass a SOC 2 audit takes senior engineers who understand what the AI built and why certain choices break at scale.

MarsDevs provides senior engineering teams for founders who need to ship fast without compromising quality. We use these AI tools daily to move faster. The tools work for us, not the other way around. If you have a prototype built with Replit or Cursor and need production-grade engineering to take it to market, book a free strategy call. We can assess your codebase in 48 hours.

For technical founders, Claude Code on Opus 4.7 ($100/month Max) plus Cursor 3 ($20/month Pro) is the best stack. For non-technical founders validating an idea without engineers, Replit Agent 3 ($20/month Core) gets you to a deployed prototype faster than any other option. The right choice depends on whether you have engineers on your team or need the tool to fill that role temporarily.

No. AI coding tools handle 40 to 60% of routine coding tasks, but they cannot replace architectural planning, security design, or production operations. The 2025 Stack Overflow Developer Survey found 46% of developers distrust AI tool accuracy and only 3% highly trust the output. These tools multiply your team's output by 2 to 3x. They do not eliminate the need for a team.

Claude Code on Opus 4.7 produces the highest-quality code in blind evaluations, winning 67% of quality comparisons for correctness and completeness. Anthropic reports 3x more tasks resolved on SWE-bench Verified versus Opus 4.6, plus 70% on CursorBench. Its 1-million-token context window means it understands your full codebase architecture. Cursor 3 with Composer 2 ranks second.

Individual plans range from free (Aider, Windsurf Free, Cursor Hobby) to $200/month for heavy usage. Cursor Pro is $20/month, Pro+ $60, Ultra $200. Windsurf Pro is $20/month (raised from $15 in March 2026), Max $200. Replit Core is $20/month billed annually, Pro $95. Claude Code requires Claude Pro ($20/month) or Max ($100 to $200/month). Most professional developers spend $40 to $200/month across one or two tools.

For rapid prototyping by a non-technical founder, Replit Agent 3 with its 200-minute autonomous runs and bundled deployment. For production-quality MVPs built by an engineering team, Claude Code on Opus 4.7 paired with Cursor 3. Replit gets you deployed in hours but the MVP development process for something investors will fund needs architectural thinking that Claude Code and Cursor support better than Replit's fully automated approach.

For most use cases in 2026, yes. Cursor 3's Composer 2 (200 tok/s, 61.3 CursorBench), async subagents via /multitask, and background cloud agents go far beyond Copilot's inline suggestions. GitHub Copilot CLI, launched in 2026, narrowed the gap with terminal agent capabilities and built-in security scanning. The models powering these tools also matter: Cursor gives you model choice across Claude Opus 4.7, GPT-5, and Gemini 3.1 Pro. Copilot offers a smaller model picker.

Claude Code and Cursor 3 solve different problems. Cursor 3 is an IDE with AI features: inline completions, visual diffs, Composer 2 for multi-file edits, async subagents for parallel work. Claude Code is an AI agent with code access that operates autonomously in your terminal, processing up to 1 million tokens per context with Opus 4.7. Claude Code excels at large refactors, automated workflows, and full-pipeline automation. Cursor 3 excels at daily coding flow and visual review. Many developers run both at a combined cost of around $120/month, reaching for Claude Code when Cursor's context window falls short.

Agent mode is an AI coding feature where the AI autonomously plans and executes multi-step development tasks without manual intervention. The AI reads files, writes code, runs commands, handles errors, and iterates until done. Cursor 3 supports async subagents via /multitask, Windsurf uses Cascade with Code and Chat modes, Replit Agent 3 runs autonomously up to 200 minutes per session, and Claude Code is entirely agent-based with sub-agents, hooks, skills, and MCP plugins.

Three to track. GitHub Copilot CLI brought Copilot's coding agent to the terminal with built-in security scanning. Aider is the leading open-source pair-programming CLI, free and model-agnostic with 75+ LLM provider support. OpenCode is gaining ground for self-hosted setups. None has displaced the four tools in this comparison yet, but Copilot CLI's GitHub-native integration and Aider's open-source flexibility make them worth evaluating for specific use cases.

The AI coding tool you choose matters less than what you build with it. Every month spent evaluating tools is a month your competitors spend shipping features.

Here is a 30-second decision. If you are technical and want the best daily IDE, start with Cursor 3. If budget matters and you want an IDE, Windsurf. If you are happy in the terminal and want open-source, Aider. If you have no developers and need a prototype yesterday, Replit Agent 3. If you need deep codebase understanding, sub-agents, and autonomous execution, Claude Code on Opus 4.7. If you can run two, run Cursor 3 and Claude Code together.
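If you prefer the 30-second decision as something you can read top to bottom, here is the same logic as a small function. The flag names and the order in which the rules are checked are our assumptions for this sketch; the paragraph above lists the options without ranking them.

```python
def pick_tool(
    *,
    has_developers: bool = True,
    can_run_two: bool = False,
    needs_autonomous_agent: bool = False,
    wants_open_source_cli: bool = False,
    budget_matters: bool = False,
) -> str:
    """Return a starting recommendation; the first matching rule wins."""
    if not has_developers:
        return "Replit Agent 3"
    if can_run_two:
        return "Cursor 3 + Claude Code"
    if needs_autonomous_agent:
        return "Claude Code on Opus 4.7"
    if wants_open_source_cli:
        return "Aider"
    if budget_matters:
        return "Windsurf"
    return "Cursor 3"  # technical team that wants the best daily IDE


print(pick_tool(has_developers=False))
print(pick_tool(budget_matters=True))
print(pick_tool(can_run_two=True, needs_autonomous_agent=True))
```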

If you have built a prototype with any of these tools and need senior engineers to turn it into a production-ready product, that is exactly what we do. Founded in 2019, MarsDevs has shipped 80+ products across 12 countries for startups and scale-ups. Our internal SEO automation runs on Claude Code with sub-agents. Your project will too. We start building in 48 hours, and your team keeps 100% code ownership from day one. Book a free strategy call to get your project assessed.

Co-Founder, MarsDevs

Vishvajit started MarsDevs in 2019 to help founders turn ideas into production-grade software. With deep expertise in AI, cloud architecture, and product engineering, he has led the delivery of 80+ software products for clients in 12+ countries.
