By Vishvajit Pathak, Co-Founder, MarsDevs. Published April 19, 2026.

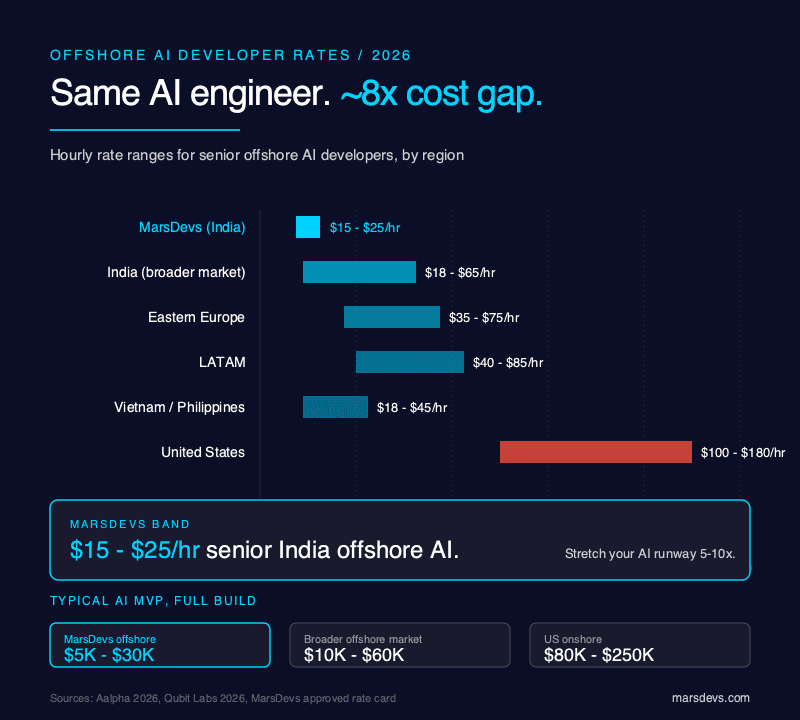

TL;DR: Hiring offshore AI developers in 2026 costs $15–$25/hr through a senior India-based partner like MarsDevs, $18–$65/hr across the broader offshore market, and $100–$180/hr in the US. A vetted AI MVP lands in 3–12 weeks at $5,000–$30,000. We have shipped 80+ products across 12 countries since 2019 with an India-based team. The pattern that separates real AI engineers from GPT-wrappers: framework-fluency in LangChain, LangGraph, and vLLM, paired with a paid 2–4 week trial. Full cost table, vetting rubric, IP clauses, and red flags below.

Offshore AI hiring broke in 2025 for one reason. Every generalist shop rewrote its LinkedIn bio to include "AI" after GPT-4o shipped, and founders could not tell a real LangGraph engineer from a Flask developer calling the OpenAI API. Per a 2026 groovyweb industry survey, 80% of CTOs picked the wrong offshore vendor the first time. Average damage: $47,000 and five months. 2026 looks different because the market has re-sorted around evidence: shipped systems, eval sets, observability tooling, and named framework fluency.

You just closed your seed round. Your investors want an AI-powered feature live in 90 days. Hiring a full US AI team will eat half your runway before you write a line of production code. Offshore is the obvious play. The non-obvious part: "offshore AI developer" now means at least five different roles, with five different rates. Pick the wrong one and it costs more than the cheapest vendor you rejected.

This playbook is the one we wish existed when we started shipping AI builds in 2023. It covers 2026 cost bands, geo tradeoffs, the engagement-model decision matrix, a 7-stage vetting process with real interview questions, the IP clauses most contracts miss, and an honest section on when you should not hire offshore at all. Every number in the cost tables comes either from our own engagements or from cited 2026 market data. Every framework we name is one we have shipped to production.

For a broader view that is not geo-specific, read our guide on how to hire AI developers in general after you finish here.

An offshore AI developer in 2026 is a specialist who builds production AI systems. That work splits into five distinct role types: LLM/RAG engineer, AI agent engineer, ML engineer, data scientist, and AI-adjacent full-stack developer. Confusing them is the single most expensive mistake founders make. You do not hire a data scientist to ship a RAG chatbot, and you do not hire an ML engineer to tune prompts for GPT responses.

Here is how the roles map to the work you are probably trying to get done.

| Role | Primary work | Framework stack | When you need one |
|---|---|---|---|
| LLM / RAG engineer | Retrieval-augmented generation, prompt engineering, vector DB tuning, eval sets | LangChain, LlamaIndex, Pinecone, Weaviate, Chroma, Qdrant | 80% of 2026 offshore AI work. Chatbots, search, internal copilots. |
| AI agent engineer | Multi-step agents, tool use, planners, workflow orchestration | LangGraph, CrewAI, AutoGen, Semantic Kernel | Autonomous task runners, research agents, agentic workflows. |
| ML engineer | Training, fine-tuning, model serving, MLOps | PyTorch, TensorFlow, JAX, vLLM, Ollama, Hugging Face Transformers | Custom model training, fine-tuning, on-prem inference. |
| Data scientist | Data modeling, feature engineering, statistical analysis | scikit-learn, pandas, Jupyter, SQL, dbt | Predictive analytics, forecasting, BI-flavored AI. |
| AI-adjacent full-stack | Ships AI features inside a SaaS product: calls OpenAI/Anthropic APIs, handles streaming and caching | Next.js, FastAPI, Vercel AI SDK, OpenAI API, Anthropic API | Adding AI to an existing product without deep model work. |

MarsDevs lived-experience note: of the AI builds we have shipped since 2023, roughly 70% were LLM/RAG work, 15% were agent work, 10% were AI-adjacent full-stack features, and only 5% touched ML-engineer-level model training. If you do not know which bucket your project falls into, assume RAG or AI-adjacent full-stack. Those are the highest-value hires for most founders.

A quick disambiguation to save your budget. A "Python developer with 2 years of AI experience" posted on a marketplace in April 2026 is usually an AI-adjacent full-stack developer who has called the OpenAI API twice. That is fine if that is what you need. It is disastrous if you are building a production RAG system and do not find out until sprint 3.

For cost discipline once you know the role, cross-reference the AI development cost breakdown for 2026.

Offshore AI developer rates in 2026 run $15–$25/hr through a senior India-based product partner like MarsDevs, $18–$65/hr across the broader India and South Asia offshore market, $35–$75/hr in Eastern Europe, $40–$85/hr in LATAM, and $100–$180/hr in the US. For a full AI MVP, that translates to $5,000–$30,000 offshore vs $80,000–$250,000 onshore. We have shipped every AI MVP since 2023 at MarsDevs inside the $5,000–$30,000 band, and timelines have landed in 3–12 weeks.

Here is the cost table we give founders on the first call.

| AI workload | MarsDevs offshore (India) | Broader offshore market | US onshore | Typical timeline |
|---|---|---|---|---|
| AI MVP | $5,000–$30,000 | $10,000–$60,000 | $80,000–$250,000 | 3–12 weeks |
| Simple AI agent | $3,000–$15,000 | $6,000–$30,000 | $40,000–$150,000 | 2–10 weeks |
| Multi-agent system | $5,000–$30,000 | $12,000–$60,000 | $100,000–$350,000 | 4–14 weeks |
| RAG system | $8,000–$50,000 | $15,000–$90,000 | $120,000–$400,000 | 3–16 weeks |
| AI chatbot | $5,000–$40,000 | $10,000–$75,000 | $80,000–$300,000 | 3–12 weeks |
| Full enterprise AI | $50,000–$300,000 | $100,000–$500,000 | $500,000–$2M+ | 4–9 months |

Rate ranges outside the MarsDevs column use published 2026 offshore data from aalpha.net and qubit-labs.com. Our own $15–$25/hr and workload ranges have been stable across 2024–2026.

The headline hourly rate is not the total cost. Four line items regularly turn a $20K quote into a $35K invoice.

MarsDevs lived-experience note: we have seen founders accept a $12/hr quote from a marketplace contractor, then pay another $8K in project management, QA rework, and model-cost overruns across a 12-week build. Net rate ends up at $22–$28/hr with worse outcomes than our direct quote. The cheapest line item is rarely the cheapest delivery.
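The arithmetic behind that note is simple: spread the hidden overhead across the billable hours. A minimal sketch in Python, assuming the overhead is a flat dollar amount and the billable hours are known; the 500 and 800 hour figures are illustrative assumptions chosen to bracket the quoted range, not numbers from a real engagement.

```python
def effective_hourly_rate(quoted_rate: float, billable_hours: float, overhead: float) -> float:
    """Quoted rate plus hidden overhead (PM, QA rework, model-cost overruns) spread per billable hour."""
    if billable_hours <= 0:
        raise ValueError("billable_hours must be positive")
    return quoted_rate + overhead / billable_hours


# A $12/hr quote plus $8,000 of overhead on a 12-week build.
# 500 h is roughly one engineer at ~42 h/week; 800 h is roughly 1.7 engineers at 40 h/week.
for hours in (500, 800):
    print(hours, round(effective_hourly_rate(12, hours, 8_000), 2))
```

At 480 hours (one engineer, 40 hours a week for 12 weeks) the same overhead lands at about $28.67/hr, so the $22–$28/hr range corresponds to roughly 500–800 billable hours.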

For deeper cost breakdowns on agentic work, see the AI agent development cost breakdown.

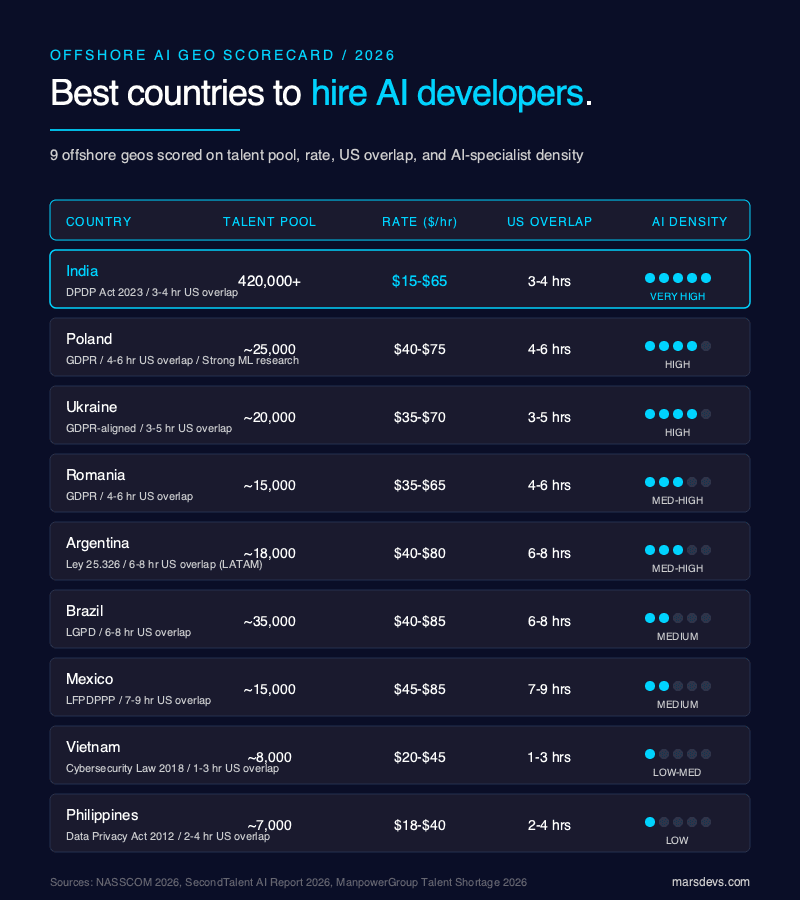

The best country to hire offshore AI developers in 2026 depends on one variable: how many productive time-zone hours you need with the team. India wins on talent pool, cost, and AI-specialist density. Poland and Ukraine win on ML research pedigree. Argentina, Brazil, Mexico, and Colombia win on US time-zone overlap. Vietnam, the Philippines, and Egypt win on cost but lag on AI-specific depth.

Here is the 2026 geo scorecard we use internally to tell founders where to look.

| Country | AI talent pool | Avg rate ($/hr) | US overlap (hrs) | EU overlap (hrs) | Data-protection law | AI-specialist density |
|---|---|---|---|---|---|---|
| India | 420,000+ AI/DS pros | $15–$65 | 3–4 | 4–6 | DPDP Act 2023 | Very high |
| Poland | ~25,000 | $40–$75 | 4–6 | Full | GDPR | High (strong ML) |
| Ukraine | ~20,000 (pre-war estimate) | $35–$70 | 3–5 | Full | GDPR-aligned | High |
| Romania | ~15,000 | $35–$65 | 4–6 | Full | GDPR | Medium-high |
| Argentina | ~18,000 | $40–$80 | 6–8 | 2–4 | Ley 25.326 | Medium-high |
| Brazil | ~35,000 | $40–$85 | 6–8 | 2–4 | LGPD | Medium |
| Mexico | ~15,000 | $45–$85 | 7–9 | 1–3 | LFPDPPP | Medium |
| Colombia | ~10,000 | $35–$70 | 7–9 | 1–3 | Law 1581 | Medium |
| Vietnam | ~8,000 | $20–$45 | 1–3 | 3–5 | Cybersecurity Law 2018 | Low-medium |
| Philippines | ~7,000 | $18–$40 | 2–4 | 3–5 | Data Privacy Act 2012 | Low |

Sources for talent pool sizing: NASSCOM and secondtalent.com 2026 AI talent report. By the figures in the table, India's pool is roughly 12x the next-largest offshore geo (Brazil, ~35,000). The ManpowerGroup 2026 Talent Shortage Survey flagged AI Model & Application Development as the single hardest role to fill worldwide at 39%, with 82% of Indian employers reporting shortage vs 72% globally (reported by Deccan Herald and cxotoday.com).

Founders ask about US-India overlap, hear "only 3–4 productive hours," and panic. We have run India-based teams for US-based founders since 2019, as part of client work across 12 countries. The honest read: 3–4 hours of real overlap is enough for daily standups, live reviews, and unblocking. The rest of the day becomes async execution time. That is a feature, not a bug: code gets reviewed overnight, tickets move faster, and release cadence speeds up.

LATAM's 6–8 hour US overlap matters for two cases: pair-programming-heavy teams, and regulated industries where synchronous legal sign-off is non-negotiable. For everything else, the India async pattern ships faster.

For a deeper comparison, we break down the tradeoffs of offshore, nearshore, and onshore separately.

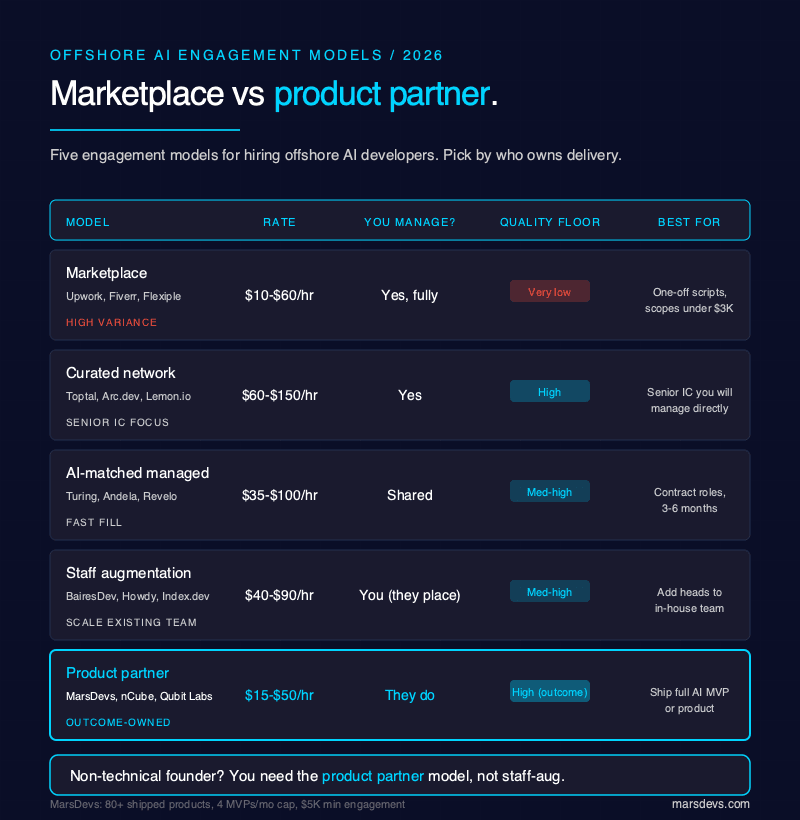

Five engagement models dominate offshore AI hiring in 2026: marketplaces (Upwork, Fiverr), curated networks (Toptal, Arc.dev, Lemon.io), AI-matched managed networks (Turing, Andela, Revelo), staff-augmentation firms (BairesDev, Howdy, Index.dev), and product partners (MarsDevs, nCube, Qubit Labs). Each has a distinct cost structure, quality floor, and failure mode. Picking the wrong one is the second-most expensive mistake after picking the wrong role.

Here is the decision matrix we walk founders through.

| Model | Example platforms | Typical rate | Who manages delivery | Quality floor | Best for |
|---|---|---|---|---|---|
| Marketplace | Upwork, Fiverr, Flexiple | $10–$60/hr | You do | Very low | One-off scripts, small scopes under $3K |
| Curated network | Toptal, Arc.dev, Lemon.io | $60–$150/hr | You do | High | Senior IC you will manage directly |
| AI-matched managed | Turing, Andela, Revelo | $35–$100/hr | Shared | Medium-high | Fast-fill contract roles, 3–6 months |
| Staff augmentation | BairesDev, Howdy, Index.dev, Gigster | $40–$90/hr | You do (they place) | Medium-high | Scaling an existing in-house team |
| Product partner | MarsDevs, nCube, Qubit Labs, Soft Suave | $15–$50/hr | They do | High (outcome-based) | Shipping a whole AI MVP/product |

Marketplace fits when you have a 2-week scripting job, you can write the JD yourself, and you have the technical skill to review output. It does not fit for anything you would call "production AI." The quality variance is extreme.

Curated networks fit when you have an in-house tech lead who will run a senior IC as if they were an employee. Toptal and Arc vet hard, but they are selling you a person, not an outcome. You still own delivery risk.

AI-matched managed networks fit when you need a named senior engineer in under a week for a 3–6 month contract. Turing in particular has moved hard into AI-specific matching. You are paying for speed of fill and replacement guarantees.

Staff augmentation fits when you already have a functioning engineering team and you are adding heads. It is not a fit for a non-technical founder without an in-house tech lead: staff augmentation and outsourcing solve different problems, and you need the outsourcing side.

Product partners fit when you want an outcome shipped, not heads placed. You describe the problem; they bring BA, PM, QA, and devs; they deliver the product. This is where MarsDevs sits. Our minimum engagement is $5,000, we cap intake at 4 MVPs and 4 SaaS projects per month, and team composition flexes across full-stack, mobile, DevOps, and AI specialists.

MarsDevs lived-experience note: most of the founders who come to us previously tried a marketplace or AI-matched network first. The most common failure mode was not bad code. It was scope drift with nobody owning delivery. A product partner's job is to own that.

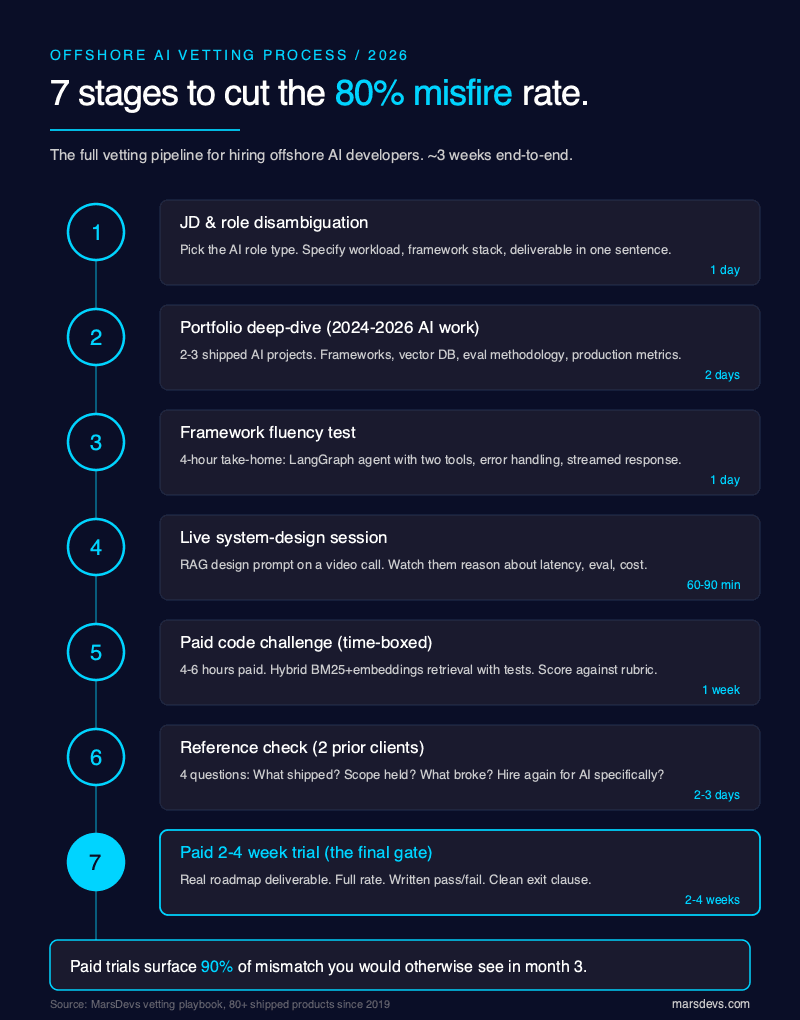

Vetting offshore AI developers correctly is the single highest-value decision in the hiring process. The 80% wrong-vendor rate from the groovyweb 2026 survey is not a talent problem. It is a filtering problem. Run this 7-stage process and the misfire rate drops to near zero. Skip any stage and you inherit the 80%.

Here is the process, in order.

Stage 1: Job description and role disambiguation. Write the JD around one of the five role types above, not around a generic "AI developer" title. Specify the workload (RAG / agent / fine-tuning / AI-adjacent), the stack you expect (LangChain? LangGraph? vLLM?), and the deliverable in one sentence. Vendors who respond with a generic pitch that ignores the workload get filtered out at this stage.

Stage 2: Portfolio deep-dive on 2024–2026 AI work. Ask for 2–3 AI projects shipped in the last 24 months, with concrete detail: which frameworks, which vector DB, eval methodology, production metrics. "We helped a client with AI" is not a portfolio. "We built a RAG over 40K legal documents using LlamaIndex and Qdrant, reduced hallucination rate from 11% to 2.3% with a structured eval set of 500 questions" is a portfolio.

Stage 3: Framework fluency test. Send a take-home with a specific framework constraint: "Build a small LangGraph agent that uses two tools (web search and calculator), handles tool errors, and returns a streamed response." Time-box it to 4 hours, then review the code. You are looking for idiomatic framework use, not just working code.
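What "handles tool errors" should mean is easy to show without any framework. The sketch below is plain Python, not LangGraph: a hypothetical two-tool registry in which every tool failure comes back to the agent as structured data instead of crashing the run. The `web_search` tool is a stub that always fails so the error path gets exercised; `calculator`, `call_tool`, and `TOOLS` are illustrative names, not part of any library.

```python
import ast
import operator

# Arithmetic operators the calculator tool accepts; anything else is rejected.
_BIN_OPS = {ast.Add: operator.add, ast.Sub: operator.sub,
            ast.Mult: operator.mul, ast.Div: operator.truediv}
_UNARY_OPS = {ast.USub: operator.neg, ast.UAdd: operator.pos}


def calculator(expression: str) -> float:
    """Evaluate basic arithmetic by walking the AST instead of calling eval()."""
    def evaluate(node: ast.AST) -> float:
        if isinstance(node, ast.Constant) and type(node.value) in (int, float):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _BIN_OPS:
            return _BIN_OPS[type(node.op)](evaluate(node.left), evaluate(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _UNARY_OPS:
            return _UNARY_OPS[type(node.op)](evaluate(node.operand))
        raise ValueError(f"unsupported expression: {expression!r}")
    return evaluate(ast.parse(expression, mode="eval").body)


def web_search(query: str) -> str:
    """Hypothetical stub that simulates an outage so the error path is exercised."""
    raise ConnectionError("search backend unavailable")


TOOLS = {"calculator": calculator, "web_search": web_search}


def call_tool(name: str, argument: str) -> dict:
    """Run a tool and always return a structured result the agent can reason about."""
    if name not in TOOLS:
        return {"ok": False, "error": f"unknown tool: {name}"}
    try:
        return {"ok": True, "result": TOOLS[name](argument)}
    except Exception as exc:  # surface any tool failure to the agent instead of crashing the run
        return {"ok": False, "error": f"{type(exc).__name__}: {exc}"}


print(call_tool("calculator", "2 * (3 + 4)"))
print(call_tool("calculator", "1 / 0"))
print(call_tool("web_search", "LangGraph release notes"))
```

Returning the error as data lets the agent retry, switch tools, or tell the user what failed. That is the behavior a reviewer should look for when the candidate builds the same contract with LangGraph's own primitives.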

For the framework landscape, see our comparisons of LangChain vs LlamaIndex (which your vendor should know) and of LangGraph vs CrewAI vs AutoGen.

Stage 4: Live system-design session. Invite them to a video call. Give them a prompt: "Design a RAG system for a 200-page employee handbook that needs to handle 500 queries per day, stay under $200/month in infra, and update weekly." Watch them reason. The red flag is a candidate who jumps to a framework before asking about latency, update frequency, or eval criteria.
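A strong candidate does rough cost arithmetic before naming a framework. A sketch of that back-of-envelope check in Python; the token counts and per-million-token prices are placeholder assumptions, not any provider's actual pricing, and a 30-day month is assumed.

```python
def llm_cost_per_query(input_tokens: int, output_tokens: int,
                       input_price_per_m: float, output_price_per_m: float) -> float:
    """Dollar cost of one query given token counts and per-million-token prices."""
    return (input_tokens * input_price_per_m + output_tokens * output_price_per_m) / 1_000_000


MONTHLY_BUDGET = 200.0          # from the design prompt
QUERIES_PER_MONTH = 500 * 30    # 500 queries/day, assuming a 30-day month

# All-in ceiling per query before anything else is paid for.
ceiling_per_query = MONTHLY_BUDGET / QUERIES_PER_MONTH

# Placeholder assumptions, not real pricing: ~3,000 prompt tokens (question plus
# retrieved chunks), ~300 answer tokens, $1.00 / $4.00 per million input/output tokens.
llm_spend = QUERIES_PER_MONTH * llm_cost_per_query(3_000, 300, 1.00, 4.00)
remaining = MONTHLY_BUDGET - llm_spend

print(f"All-in ceiling per query: ${ceiling_per_query:.4f}")
print(f"LLM spend: ${llm_spend:.2f}; left for hosting, vector DB, and weekly re-indexing: ${remaining:.2f}")
```

Under these placeholder numbers the LLM calls use about $63 of the $200, leaving roughly $137 for everything else. The point of the exercise is that the candidate asks for real token counts and prices before committing to an architecture.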

Stage 5: Paid work sample. Paid, scoped, 4–6 hours of work, with a real deliverable. Pay for it. Example: "Build a Python function that retrieves the top 5 most relevant chunks from a 10MB corpus using hybrid search (BM25 + embeddings), returns a structured JSON response, and includes 3 test cases." Review against a rubric (see the trial scorecard in the next section).
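For calibration, here is a standard-library sketch of the shape that example task could take. Assumptions: BM25 uses the common k1=1.5, b=0.75 defaults; `toy_embed` is a hashed character-trigram vector standing in for a real embedding model; and the two rankings are merged with reciprocal rank fusion (k=60), one common fusion method among several. It is not a production retriever; a real submission would swap in an embedding model and a vector store.

```python
import json
import math
import re
import zlib
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def bm25_scores(query: str, chunks: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Okapi BM25 score of every chunk against the query."""
    docs = [tokenize(c) for c in chunks]
    n = len(docs)
    avgdl = (sum(len(d) for d in docs) / n) or 1.0
    df = Counter(term for d in docs for term in set(d))
    scores = []
    for doc in docs:
        tf = Counter(doc)
        score = 0.0
        for term in tokenize(query):
            if term in tf:
                idf = math.log(1 + (n - df[term] + 0.5) / (df[term] + 0.5))
                norm = tf[term] + k1 * (1 - b + b * len(doc) / avgdl)
                score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores


def toy_embed(text: str, dim: int = 256) -> list[float]:
    """Stand-in for a real embedding model: hashed character trigrams (deterministic via crc32)."""
    vec = [0.0] * dim
    padded = f"  {text.lower()} "
    for i in range(len(padded) - 2):
        vec[zlib.crc32(padded[i:i + 3].encode()) % dim] += 1.0
    return vec


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def hybrid_search(query: str, chunks: list[str], top_k: int = 5, rrf_k: int = 60) -> str:
    """Fuse BM25 and embedding rankings with reciprocal rank fusion; return JSON."""
    if not chunks:
        return json.dumps({"query": query, "results": []})
    lexical = bm25_scores(query, chunks)
    q_vec = toy_embed(query)
    dense = [cosine(q_vec, toy_embed(c)) for c in chunks]
    fused = [0.0] * len(chunks)
    for scores in (lexical, dense):
        ranking = sorted(range(len(chunks)), key=lambda i: scores[i], reverse=True)
        for rank, idx in enumerate(ranking, start=1):
            fused[idx] += 1.0 / (rrf_k + rank)
    best = sorted(range(len(chunks)), key=lambda i: fused[i], reverse=True)[:top_k]
    results = [{"chunk_id": i, "score": round(fused[i], 6), "text": chunks[i]} for i in best]
    return json.dumps({"query": query, "results": results})


handbook = [
    "Employees accrue 20 vacation days per year.",
    "The office is closed on public holidays.",
    "Expense reports are due within 30 days.",
    "Remote work requires manager approval.",
    "Laptops are replaced every three years.",
]
print(hybrid_search("How many vacation days do employees get?", handbook, top_k=3))
```

Rank fusion is used here because BM25 scores and cosine similarities live on different scales; fusing ranks sidesteps score normalization.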

Stage 6: Reference checks. Ask the vendor for 2 prior clients who ran AI projects. Ask those clients 4 specific questions: (1) What did they actually ship? (2) Did it stay in scope? (3) What broke in production and how was it handled? (4) Would you hire them again for an AI project specifically? Treat a hire-them-again rate under 80% as disqualifying.

Stage 7: Paid trial. This is the final gate. Scope a small but real deliverable from your actual roadmap. Pay the trial rate. Define pass/fail before starting. We run this for every long-term engagement. It is the single highest-signal stage in the process.

Per a 2026 groovyweb survey, companies running paid trials see 78% lower 6-month turnover and 42% higher satisfaction. Take that number with a caveat: it is vendor-published. The direction is right, the precision is not gospel. Our own data at MarsDevs says the same thing. Trials surface 90% of the mismatch you would otherwise discover in month 3.

A candidate who cannot answer 7 of these 10 with specificity is not an AI engineer. They are an AI-adjacent developer, which may be fine. Adjust the role, or adjust the rate.

A paid trial for an offshore AI developer is a 2–4 week engagement with a real deliverable, a fixed rate, a pass/fail scorecard, and a clean exit clause. It is not a free test. It is not a homework assignment. It is a miniature project. We have run dozens of these with new engineers and new vendors, and the pattern that predicts long-term fit is simple: do they ship something that works, on time, with communication hygiene that matches the promise?

Here is the trial template we use.

Score each category 1–5. Pass threshold is total ≥ 28/35.

| Category | What we score | Score |
|---|---|---|
| Framework fluency | Idiomatic use of LangChain/LangGraph/LlamaIndex, no workarounds | 1–5 |
| Production hygiene | Tests, logging, error handling, observability | 1–5 |
| Eval discipline | Wrote eval set, measured accuracy, iterated on failures | 1–5 |
| Cost awareness | Knows model costs, picked the right tier, cached where sensible | 1–5 |
| Communication | Daily async updates, raised blockers early, demo quality | 1–5 |
| On-time delivery | Hit the agreed milestones within the scope | 1–5 |
| Code review response | Took feedback well, iterated fast, did not argue for argument's sake | 1–5 |
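The pass rule is mechanical enough to encode so every reviewer applies it the same way. A minimal Python sketch; the snake_case category keys are our own labels for the seven rows in the table.

```python
CATEGORIES = (
    "framework_fluency", "production_hygiene", "eval_discipline",
    "cost_awareness", "communication", "on_time_delivery", "code_review_response",
)
PASS_THRESHOLD = 28  # out of 35


def score_trial(scores: dict[str, int]) -> tuple[int, bool]:
    """Return (total, passed) for a paid-trial scorecard with seven categories scored 1-5."""
    missing = [c for c in CATEGORIES if c not in scores]
    if missing:
        raise ValueError(f"missing categories: {missing}")
    for category in CATEGORIES:
        if not 1 <= scores[category] <= 5:
            raise ValueError(f"{category} must be scored 1-5, got {scores[category]}")
    total = sum(scores[c] for c in CATEGORIES)
    return total, total >= PASS_THRESHOLD


all_fours = {c: 4 for c in CATEGORIES}
print(score_trial(all_fours))
print(score_trial({**all_fours, "cost_awareness": 3}))
```

A threshold of 28/35 works out to an average of 4 per category, so any 3 has to be offset by a 5 somewhere else.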

The trial contract should include three clean exits: (1) we pay in full, keep the code, move to long-term engagement; (2) we pay in full, keep the code, do not continue, no hard feelings; (3) we terminate early with pro-rata payment if communication breaks down or scope is missed by week 1.

MarsDevs lived-experience note: we cap at 4 MVPs per month and offer a minimum $5,000 engagement. Below that, a freelancer serves you better. A trial inside our engagement starts at week 1 of the SOW. If the week 2 check-in does not hit agreed milestones, we restructure at no cost to the founder. That honesty is why our retention rate across 80+ shipped products is what it is.

An NDA is not enough for offshore AI work in 2026. NDAs stop confidential information from leaking. They do not assign ownership of what the offshore team creates. That is why most bad AI contracts end with a dispute over who owns the fine-tuned weights, the training dataset, or the prompt library. You need an IP Assignment Agreement, a DPA (Data Processing Agreement), and an MSA + SOW stack, with AI-specific clauses baked in.

Here is the 10-clause checklist we send every founder before they sign any offshore AI contract.

A generic software NDA says "don't tell anyone our secrets." An AI-specific contract has to also say: "Don't train on our data without permission. Don't retain model weights derived from our data. Don't send our data through a third-party LLM API without disclosure. Don't cache our prompts in a way we can't purge."

If your offshore vendor cannot tell you which third-party APIs your data traverses during their build (OpenAI? Anthropic? a proxy?), that is a disqualifier. In 2026, data flow mapping is table stakes.
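A data-flow map does not need special tooling. A minimal Python sketch, assuming a hypothetical record format the vendor fills in for each external service; the `DataFlow` fields and the service names in the example are illustrative, not a standard, and the checks mirror three of the AI-specific clauses above (disclosure, no training on client data, purgeable prompt caches).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataFlow:
    service: str                      # third-party API or proxy the data passes through
    data_categories: tuple[str, ...]  # e.g. ("prompts", "customer PII")
    disclosed_in_contract: bool
    provider_may_train: bool          # provider is allowed to train on this data
    prompts_cached: bool
    purge_supported: bool             # cached data can be purged on request


def contract_violations(flows: list[DataFlow]) -> list[str]:
    """Flag flows that break the disclosure, no-training, or purge clauses."""
    problems = []
    for f in flows:
        if not f.disclosed_in_contract:
            problems.append(f"{f.service}: undisclosed third-party data flow")
        if f.provider_may_train:
            problems.append(f"{f.service}: provider may train on client data")
        if f.prompts_cached and not f.purge_supported:
            problems.append(f"{f.service}: cached prompts cannot be purged")
    return problems


flows = [
    DataFlow("LLM provider API", ("prompts",), True, False, True, True),
    DataFlow("prompt-logging proxy", ("prompts", "customer PII"), False, False, True, False),
]
for problem in contract_violations(flows):
    print(problem)
```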

Trust but verify. Ask for the paperwork before sharing any production data with any offshore AI vendor, including ours.

Nine patterns give away an offshore vendor who added "AI" to their LinkedIn overnight but cannot ship production AI. If you see 3 or more, walk away. If you see 6 or more, the vendor is a wrapper, not an engineering team.

MarsDevs lived-experience note: the 3-plus rule has never failed us in 3+ years of vetting offshore AI teams. The vendors we partner with on overflow capacity all pass all 9. Most marketplaces we tested fail 5 or more. You are choosing between a 4% failure rate and a 60% failure rate based on this list alone.

Three engagement structures dominate offshore AI work: dedicated team (T&M, monthly), fixed-bid (scope-locked, milestone-paid), and outcome-based (shipped-product, pay-on-delivery). Each has a distinct risk profile. Fixed-bid fits well-scoped MVPs. Dedicated team fits ongoing product work. Outcome-based fits founders who cannot review technical delivery and need the vendor to own risk.

Here is how to pick.

| Model | When to use | Who owns scope risk | Who owns delivery risk | Typical rate shape |
|---|---|---|---|---|
| Dedicated team (T&M) | Ongoing product, evolving scope, in-house tech lead | You | Shared | Monthly, $15–$25/hr |
| Fixed-bid | Well-defined MVP, known scope, short timeline | Vendor | Vendor | $5K–$50K milestone-paid |
| Outcome-based | Founder without tech lead, clear success metric | Vendor | Vendor | Higher total, lower ambiguity |

We have shipped 80+ products across 12 countries, with 100+ engineers on staff, and we cap intake at 4 MVPs and 4 SaaS projects per month. Minimum engagement is $5,000. Team composition flexes across BA, PM, QA, full-stack, mobile, and DevOps, with AI specialists layered in for AI workloads. Most founders start on a fixed-bid 4–8 week MVP, then convert to a dedicated team for ongoing product work after ship. That path is what has kept our retention rate high across AI builds since 2023.

If you are still deciding whether to hire by role or by outcome, our guide on how to hire AI developers in general, regardless of geo, has the role-level playbook.

Managing an offshore AI team is a communication-and-tooling problem, not a time-zone problem. Four disciplines separate teams that ship from teams that drift: async-by-default rituals, a tight tooling stack, AI-specific observability, and a clear escalation SLA. Time-zone overlap matters for about 90 minutes of synchronous work per day. The rest of the day is execution, and the tooling stack determines whether those hours produce output or chaos.

Here is the operating system we run with offshore AI teams.

Every offshore engagement needs an escalation SLA, in writing. Ours looks like this:

If your offshore vendor cannot commit to an SLA in writing, they are not ready for your production AI workload.

India-US overlap is 3–4 hours of productive time, typically 8am–12pm PT or 11am–3pm ET. LATAM-US overlap is 6–8 hours. Eastern Europe-US overlap is 4–6 hours at the edges of the workday. For daily standups and live reviews, 3–4 hours is enough. For pair programming, LATAM wins. For async execution velocity, India wins by shipping into your sleep cycle. We have delivered for US founders on India rhythm since 2019. The math holds.
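The window above is easy to sanity-check. A short Python sketch that converts 8am–12pm PT to ET and IST and measures how many hours two working windows share. It hard-codes UTC offsets and assumes US daylight saving time is in effect (as in April); the 13:00–22:00 IST shift in the example is hypothetical, not a MarsDevs schedule.

```python
from datetime import datetime, timedelta, timezone

# Fixed offsets, assuming US daylight saving time is in effect (mid-March to early November).
# India does not observe DST, so IST is UTC+5:30 year-round.
PDT = timezone(timedelta(hours=-7), "PDT")
EDT = timezone(timedelta(hours=-4), "EDT")
IST = timezone(timedelta(hours=5, minutes=30), "IST")


def overlap_hours(a_start: datetime, a_end: datetime,
                  b_start: datetime, b_end: datetime) -> float:
    """Return how many hours two timezone-aware windows share (0.0 if disjoint)."""
    latest_start = max(a_start, b_start)
    earliest_end = min(a_end, b_end)
    return max(0.0, (earliest_end - latest_start).total_seconds() / 3600)


# The window quoted above: 8am-12pm PT on Monday, April 20, 2026.
pt_start = datetime(2026, 4, 20, 8, 0, tzinfo=PDT)
pt_end = datetime(2026, 4, 20, 12, 0, tzinfo=PDT)
for label, tz in (("ET", EDT), ("IST", IST)):
    start, end = pt_start.astimezone(tz), pt_end.astimezone(tz)
    print(f"{label}: {start:%a %H:%M} to {end:%a %H:%M}")

# Hypothetical shifted India day (13:00-22:00 IST) vs a 9am-5pm ET day.
india_shift = (datetime(2026, 4, 20, 13, 0, tzinfo=IST), datetime(2026, 4, 20, 22, 0, tzinfo=IST))
us_east_day = (datetime(2026, 4, 20, 9, 0, tzinfo=EDT), datetime(2026, 4, 20, 17, 0, tzinfo=EDT))
print(overlap_hours(*india_shift, *us_east_day))
```

Run the same function on an unshifted 9:00–18:00 IST day and it returns 0.0 against 9am–5pm ET, so the 3–4 hours of overlap quoted above assume the India team moves part of its day later.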

Three scenarios make offshore AI hiring the wrong call: regulated greenfield training on live PHI/PII that legally cannot leave the US, sub-3-week throwaway spikes where ramp cost exceeds the engagement, and teams without any internal technical reviewer. Any of the three, and offshore is a trap. The fix is not "hire better offshore." The fix is to restructure the scope or the team first.

Here is the honest guidance.

If your project involves training a custom model on live protected health information or personally identifiable financial data that is contractually or legally required to stay inside US borders, offshore fails on compliance before it fails on anything else. The legal cost of proving compliance exceeds the savings from offshore rates. Do this instead: hire a US-onshore specialist for the training phase, then transition maintenance offshore once the model is trained and the offshore team works only with synthetic or anonymized data.

If your engagement is under 3 weeks and the deliverable is throwaway (proof-of-concept that will never ship), offshore ramp cost eats the budget. Vendor onboarding, context transfer, and tooling setup typically consume 3–5 days. Do this instead: hire a marketplace freelancer for under-3-week scopes, or use an in-house engineer's side cycle.
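The ramp math is easy to check. A minimal Python sketch, assuming 5 working days per week and treating the 3–5 ramp days as pure overhead:

```python
def ramp_share(ramp_days: float, engagement_weeks: float, workdays_per_week: int = 5) -> float:
    """Fraction of an engagement's working days consumed by onboarding, context transfer, and tooling setup."""
    total_days = engagement_weeks * workdays_per_week
    if total_days <= 0:
        raise ValueError("engagement must be longer than zero days")
    return min(1.0, ramp_days / total_days)


for weeks in (2, 3, 10):
    low, high = ramp_share(3, weeks), ramp_share(5, weeks)
    print(f"{weeks}-week engagement: {low:.0%} to {high:.0%} of working days spent on ramp")
```

A 3-week engagement loses 20–33% of its working days to ramp, versus 6–10% for a 10-week one.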

If you are a non-technical founder with no in-house tech lead and no fractional CTO, offshore hiring is a coin flip. You cannot evaluate quality, you cannot run the vetting process, and you cannot catch scope drift in time. Do this instead: hire a fractional CTO first (2–8 hours per week) or engage a product partner that brings their own PM/BA/QA. A product partner owning delivery closes the gap. A dedicated IC under your management does not, if you cannot manage them.

These are the three carve-outs. For every other case, offshore AI works, and the top of this playbook tells you how.

How much does it cost to hire offshore AI developers in 2026?

Offshore AI developers cost $15–$25/hr through a senior India-based partner like MarsDevs and $18–$65/hr across the broader offshore market, vs $100–$180/hr for US-onshore equivalents. A full AI MVP lands at $5,000–$30,000 offshore in 3–12 weeks.

How long does it take to hire an offshore AI developer?

Curated platforms like Toptal and Turing can place a named senior AI engineer in 3–5 days. A full vetted hire including references and a paid trial takes 2–3 weeks. A product-partner team ramp from signed SOW to first sprint runs 1–2 weeks at MarsDevs.

What should I look for when vetting offshore AI developers?

Look for 2024–2026 shipped AI work with concrete metrics, named framework experience (LangChain, LangGraph, LlamaIndex, vLLM), a live system-design session, and a paid 2–4 week trial with written pass/fail criteria. Portfolio without production metrics is a red flag.

Is it safe to share my training data with an offshore AI team?

Yes, with an IP Assignment Agreement (not just an NDA), a DPA for any PII, a subcontracting-prohibited clause, and data-residency commitments for GDPR, HIPAA, or DPDP-covered workloads. Verify SOC 2 Type II or ISO 27001 before sharing production data.

Which country is best for hiring offshore AI developers?

India wins on talent pool and cost, with 420,000+ AI and data-science professionals and MarsDevs rates at $15–$25/hr. Poland and Ukraine lead on ML research pedigree. Argentina, Brazil, and Mexico win on US time-zone overlap. Pick on workload and overlap need.

What's the difference between offshore AI developers and staff augmentation?

Staff augmentation places individual contractors under your direct management; you run standups, reviews, and delivery. Offshore product partners deliver outcomes with their own BA, PM, QA, and engineers. Non-technical founders usually need the product-partner model.

How do I know if an offshore vendor actually does AI or just wraps GPT?

Ask for eval sets, hallucination metrics, observability tooling (Langfuse, LangSmith), vector-DB choice rationale, and at least one non-OpenAI shipping example. If they cannot answer 3 of those 5 with specificity, they are a GPT-wrapper, not an AI engineering team.

What's the minimum engagement to hire an offshore AI team?

MarsDevs minimum is $5,000. Turing starts hourly with no minimum. Toptal typically operates in 2-week increments. Scopes under $5,000 are usually better served by a freelancer than by any team engagement, because ramp overhead eats the budget.

Can offshore AI developers work with US-regulated data (HIPAA, PCI, SOC 2)?

Yes, if the vendor holds SOC 2 Type II, signs a BAA for HIPAA workloads, commits to a QSA-verified chain for PCI, and keeps data in approved regions. Verify current audit reports before sharing any regulated data.

Should I use Toptal, Turing, or a product partner like MarsDevs?

Use Toptal for senior individual contributors you will manage. Use Turing for fast-fill contract roles. Use a product partner like MarsDevs when you want an outcome shipped with BA+PM+QA+devs under one roof, not just heads placed under your management.

You do not have 5 months and $47,000 to spend on the wrong offshore AI vendor. We have shipped 80+ products across 12 countries since 2019 at $15–$25/hr, with a 4-MVPs-per-month cap to keep delivery quality where it needs to be. Every AI MVP we have shipped since 2023 landed in the $5,000–$30,000 band, in 3–12 weeks.

If that is the shape of your project, note that we take on only 4 new MVPs per month. Claim an engagement slot.


{

"@context": "https://schema.org",

"@type": "Article",

"headline": "Hiring Offshore AI Developers in 2026: The Complete Playbook",

"description": "Cost, vetting, geo tradeoffs, IP clauses, and engagement models for hiring offshore AI developers in 2026. Pillar playbook from MarsDevs.",

"image": "https://marsdevs-strapi-media-s3-987.s3.us-east-1.amazonaws.com/blog/visuals/hiring-offshore-ai-developers/cover-image.png",

"datePublished": "2026-04-19T09:00:00+05:30",

"dateModified": "2026-04-19T09:00:00+05:30",

"author": {

"@type": "Person",

"name": "Vishvajit Pathak",

"url": "https://www.linkedin.com/in/vishvajitpathak",

"jobTitle": "Co-Founder, MarsDevs",

"sameAs": [

"https://www.linkedin.com/in/vishvajitpathak",

"https://www.marsdevs.com/about"

]

},

"publisher": {

"@type": "Organization",

"name": "MarsDevs",

"url": "https://www.marsdevs.com",

"logo": {

"@type": "ImageObject",

"url": "https://www.marsdevs.com/logo.png"

}

},

"mainEntityOfPage": {

"@type": "WebPage",

"@id": "https://www.marsdevs.com/blog/hiring-offshore-ai-developers"

},

"keywords": "hire offshore AI developers, offshore AI development team, hire offshore AI engineers India, offshore AI developer rates 2026, offshore AI development cost, best countries to hire AI developers, offshore AI development company vs in-house, vet offshore AI developers, hire offshore machine learning engineers, offshore AI team India vs Eastern Europe vs LATAM"

}

{

"@context": "https://schema.org",

"@type": "FAQPage",

"mainEntity": [

{

"@type": "Question",

"name": "How much does it cost to hire offshore AI developers in 2026?",

"acceptedAnswer": {

"@type": "Answer",

"text": "Offshore AI developers cost $15–$25/hr through a senior India-based partner like MarsDevs and $18–$65/hr across the broader offshore market, vs $100–$180/hr for US-onshore equivalents. A full AI MVP lands at $5,000–$30,000 offshore in 3–12 weeks."

}

},

{

"@type": "Question",

"name": "How long does it take to hire an offshore AI developer?",

"acceptedAnswer": {

"@type": "Answer",

"text": "Curated platforms like Toptal and Turing can place a named senior AI engineer in 3–5 days. A full vetted hire including references and a paid trial takes 2–3 weeks. A product-partner team ramp from signed SOW to first sprint runs 1–2 weeks at MarsDevs."

}

},

{

"@type": "Question",

"name": "What should I look for when vetting offshore AI developers?",

"acceptedAnswer": {

"@type": "Answer",

"text": "Look for 2024–2026 shipped AI work with concrete metrics, named framework experience (LangChain, LangGraph, LlamaIndex, vLLM), a live system-design session, and a paid 2–4 week trial with written pass/fail criteria. Portfolio without production metrics is a red flag."

}

},

{

"@type": "Question",

"name": "Is it safe to share my training data with an offshore AI team?",

"acceptedAnswer": {

"@type": "Answer",

"text": "Yes, with an IP Assignment Agreement (not just an NDA), a DPA for any PII, a subcontracting-prohibited clause, and data-residency commitments for GDPR, HIPAA, or DPDP-covered workloads. Verify SOC 2 Type II or ISO 27001 before sharing production data."

}

},

{

"@type": "Question",

"name": "Which country is best for hiring offshore AI developers?",

"acceptedAnswer": {

"@type": "Answer",

"text": "India wins on talent pool and cost, with 420,000+ AI and data-science professionals and MarsDevs rates at $15–$25/hr. Poland and Ukraine lead on ML research pedigree. Argentina, Brazil, and Mexico win on US time-zone overlap. Pick on workload and overlap need."

}

},

{

"@type": "Question",

"name": "What's the difference between offshore AI developers and staff augmentation?",

"acceptedAnswer": {

"@type": "Answer",

"text": "Staff augmentation places individual contractors under your direct management; you run standups, reviews, and delivery. Offshore product partners deliver outcomes with their own BA, PM, QA, and engineers. Non-technical founders usually need the product-partner model."

}

},

{

"@type": "Question",

"name": "How do I know if an offshore vendor actually does AI or just wraps GPT?",

"acceptedAnswer": {

"@type": "Answer",

"text": "Ask for eval sets, hallucination metrics, observability tooling (Langfuse, LangSmith), vector-DB choice rationale, and at least one non-OpenAI shipping example. If they cannot answer 3 of those 5 with specificity, they are a GPT-wrapper, not an AI engineering team."

}

},

{

"@type": "Question",

"name": "What's the minimum engagement to hire an offshore AI team?",

"acceptedAnswer": {

"@type": "Answer",

"text": "MarsDevs minimum is $5,000. Turing starts hourly with no minimum. Toptal typically operates in 2-week increments. Scopes under $5,000 are usually better served by a freelancer than by any team engagement, because ramp overhead eats the budget."

}

},

{

"@type": "Question",

"name": "Can offshore AI developers work with US-regulated data (HIPAA, PCI, SOC 2)?",

"acceptedAnswer": {

"@type": "Answer",

"text": "Yes, if the vendor holds SOC 2 Type II, signs a BAA for HIPAA workloads, commits to a QSA-verified chain for PCI, and keeps data in approved regions. Verify current audit reports before sharing any regulated data."

}

},

{

"@type": "Question",

"name": "Should I use Toptal, Turing, or a product partner like MarsDevs?",

"acceptedAnswer": {

"@type": "Answer",

"text": "Use Toptal for senior individual contributors you will manage. Use Turing for fast-fill contract roles. Use a product partner like MarsDevs when you want an outcome shipped with BA+PM+QA+devs under one roof, not just heads placed under your management."

}

}

]

}

{

"@context": "https://schema.org",

"@type": "Person",

"name": "Vishvajit Pathak",

"jobTitle": "Co-Founder",

"worksFor": {

"@type": "Organization",

"name": "MarsDevs",

"url": "https://www.marsdevs.com"

},

"url": "https://www.marsdevs.com/about",

"sameAs": [

"https://www.linkedin.com/in/vishvajitpathak",

"https://www.marsdevs.com/about"

],

"knowsAbout": [

"AI engineering",

"Offshore development",

"LangChain",

"LangGraph",

"RAG systems",

"Product engineering",

"Startup MVP development"

]

}

{

"@context": "https://schema.org",

"@type": "HowTo",

"name": "How to vet offshore AI developers: 7-stage hiring process",

"description": "A 7-stage vetting process for hiring offshore AI developers in 2026, built to cut the 80% first-time vendor misfire rate to near zero.",

"totalTime": "P4W",

"estimatedCost": {

"@type": "MonetaryAmount",

"currency": "USD",

"minValue": 5000,

"maxValue": 30000

},

"step": [

{

"@type": "HowToStep",

"position": 1,

"name": "Job description and role disambiguation",

"text": "Write the JD around a specific AI role type (LLM/RAG engineer, AI agent engineer, ML engineer, data scientist, or AI-adjacent full-stack). Specify the workload, the expected stack (LangChain, LangGraph, vLLM), and the deliverable in one sentence.",

"url": "https://www.marsdevs.com/blog/hiring-offshore-ai-developers#stage-1"

},

{

"@type": "HowToStep",

"position": 2,

"name": "Portfolio deep-dive on 2024–2026 AI work",

"text": "Ask for 2–3 AI projects shipped in the last 24 months with concrete detail: frameworks used, vector DB, eval methodology, and production metrics.",

"url": "https://www.marsdevs.com/blog/hiring-offshore-ai-developers#stage-2"

},

{

"@type": "HowToStep",

"position": 3,

"name": "Framework fluency test",

"text": "Send a take-home with a specific framework constraint (for example, build a small LangGraph agent with two tools, error handling, and a streamed response). Time-box it to 4 hours.",

"url": "https://www.marsdevs.com/blog/hiring-offshore-ai-developers#stage-3"

},

{

"@type": "HowToStep",

"position": 4,

"name": "Live system-design session",

"text": "Run a 60–90 minute video session with a system-design prompt (for example, design a RAG system for a 200-page handbook, 500 queries/day, under $200/month). Watch how they reason about latency, update frequency, and eval criteria.",

"url": "https://www.marsdevs.com/blog/hiring-offshore-ai-developers#stage-4"

},

{

"@type": "HowToStep",

"position": 5,

"name": "Paid code challenge with time-box",

"text": "Scope a paid 4–6 hour deliverable with a real problem (for example, hybrid BM25+embeddings retrieval returning structured JSON with 3 test cases). Review against the trial scorecard.",

"url": "https://www.marsdevs.com/blog/hiring-offshore-ai-developers#stage-5"

},

{

"@type": "HowToStep",

"position": 6,

"name": "Reference check with 2 prior clients",

"text": "Contact 2 prior AI-project clients. Ask what shipped, whether scope held, what broke in production, and whether they would hire the vendor again for AI work. Treat a hire-again rate below 80% as a disqualifier.",

"url": "https://www.marsdevs.com/blog/hiring-offshore-ai-developers#stage-6"

},

{

"@type": "HowToStep",

"position": 7,

"name": "Paid 2–4 week trial with acceptance criteria",

"text": "Scope a real roadmap deliverable. Pay the full trial rate. Define pass/fail criteria before starting (for example, eval accuracy ≥ 85%, P95 latency ≤ 2s, zero hallucinations on the smoke test). In our experience, trials surface 90% of the mismatches that would otherwise appear in month 3.",

"url": "https://www.marsdevs.com/blog/hiring-offshore-ai-developers#stage-7"

}

]

}
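The stage-7 acceptance criteria are easiest to enforce when they are written down as code before the trial starts, so neither side can reinterpret them in week 4. Below is a minimal Python sketch, assuming each eval case has already been graded (answer correct or not, hallucination flagged by a human reviewer) and timed end to end. The `EvalCase` fields, the `trial_passes` helper, and the nearest-rank P95 method are illustrative assumptions, not MarsDevs tooling; only the thresholds (accuracy ≥ 85%, P95 ≤ 2s, zero smoke-test hallucinations) come from the criteria above.

```python
"""Minimal pass/fail scorecard for a paid AI trial (hypothetical helper)."""
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class EvalCase:
    """One graded eval-set result; field names are illustrative assumptions."""
    correct: bool             # did the answer match the expected answer?
    latency_s: float          # end-to-end response time in seconds
    hallucinated: bool        # did a reviewer flag an unsupported claim?
    smoke_test: bool = False  # is this case part of the smoke-test subset?


def p95(values: list[float]) -> float:
    """95th percentile via the nearest-rank method (always an observed value)."""
    if not values:
        raise ValueError("no latency samples")
    ordered = sorted(values)
    n = len(ordered)
    rank = (95 * n + 99) // 100  # integer ceil(0.95 * n), avoids float rounding
    return ordered[rank - 1]     # rank is 1-based


def trial_passes(cases: list[EvalCase],
                 min_accuracy: float = 0.85,
                 max_p95_s: float = 2.0) -> dict:
    """Score a trial against pass/fail criteria agreed before it started."""
    if not cases:
        raise ValueError("eval set is empty")
    accuracy = sum(c.correct for c in cases) / len(cases)
    latency_p95 = p95([c.latency_s for c in cases])
    # Hallucinations only count against the smoke-test subset, per the criteria.
    smoke_hallucinations = sum(c.hallucinated for c in cases if c.smoke_test)
    checks = {
        "accuracy": accuracy >= min_accuracy,
        "p95_latency": latency_p95 <= max_p95_s,
        "smoke_hallucinations": smoke_hallucinations == 0,
    }
    return {
        "accuracy": accuracy,
        "p95_latency_s": latency_p95,
        "smoke_hallucinations": smoke_hallucinations,
        "checks": checks,
        "passed": all(checks.values()),
    }


if __name__ == "__main__":
    # Hypothetical 20-case eval set: 18 correct, one slow outlier,
    # first 5 cases form the smoke test, no hallucinations flagged.
    cases = [EvalCase(correct=i >= 2, latency_s=1.0, hallucinated=False,
                      smoke_test=i < 5) for i in range(20)]
    cases[-1].latency_s = 3.0
    report = trial_passes(cases)
    print(report["passed"], report["accuracy"], report["p95_latency_s"])  # True 0.9 1.0
```

Nearest-rank P95 is used here because it always returns a latency that was actually observed; on small trial eval sets, interpolated percentiles can differ noticeably, so it is worth naming the percentile method in the written acceptance criteria.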

{

"@context": "https://schema.org",

"@type": "BreadcrumbList",

"itemListElement": [

{

"@type": "ListItem",

"position": 1,

"name": "Home",

"item": "https://www.marsdevs.com"

},

{

"@type": "ListItem",

"position": 2,

"name": "Blog",

"item": "https://www.marsdevs.com/blog"

},

{

"@type": "ListItem",

"position": 3,

"name": "Hiring Offshore AI Developers in 2026",

"item": "https://www.marsdevs.com/blog/hiring-offshore-ai-developers"

}

]

}

Vishvajit Pathak, Co-Founder, MarsDevs

Vishvajit started MarsDevs in 2019 to help founders turn ideas into production-grade software. With deep expertise in AI, cloud architecture, and product engineering, he has led the delivery of 80+ software products for clients in 12+ countries.
